The Silicon Mirage: Why India's $15 Billion Chip Dream Targets Yesterday's Bottleneck - and Where the Real AI Hardware Opportunity Lies

Authors:

Felix

Date:

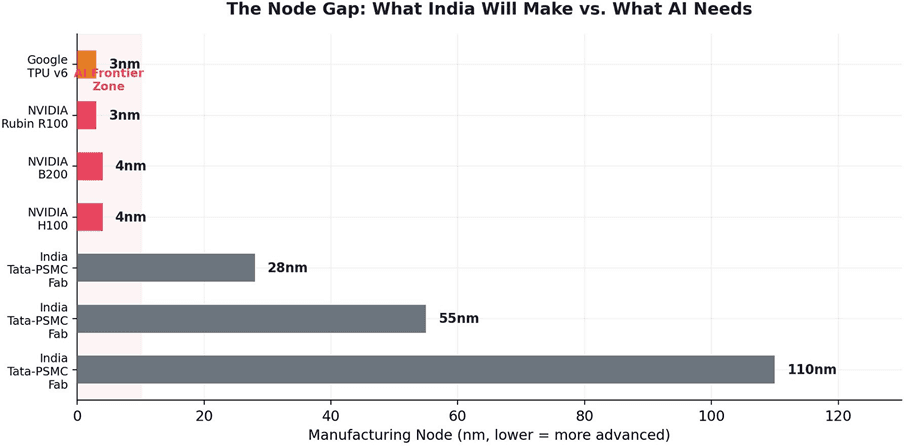

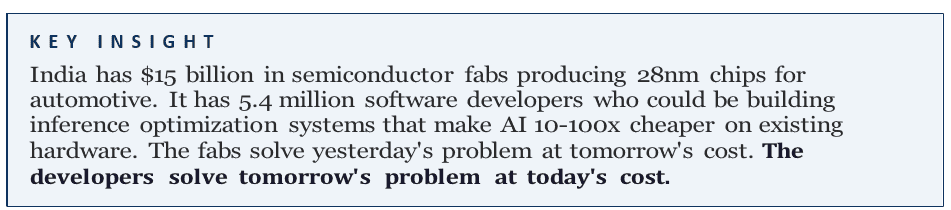

India's semiconductor mission has committed over $15 billion to building domestic chip fabrication - the nation's largest technology infrastructure bet in history. The Tata-PSMC fab in Dholera, Gujarat, reached 50% construction in early 2026 and will produce chips at 28-110nm nodes. This paper argues that this investment, while symbolically significant, targets yesterday's bottleneck. India's fabs will produce mature-node chips for automotive, IoT, and power management - not the frontier 3-5nm AI accelerators (H100s, B200s, TPUs) that the AI revolution actually requires. More critically, the AI hardware bottleneck itself has migrated. Between 2021 and 2024, the constraint was chip manufacturing. By 2025-2026, the binding constraints have shifted to high-bandwidth memory (HBM) - where only three companies worldwide can produce what AI needs and supply is sold out through 2026 - and energy infrastructure, where 50% of global data center projects face delays due to power grid limitations. India's $15 billion buys chips the AI industry doesn't need, at nodes that don't matter for AI, arriving after the bottleneck has moved. India's real AI hardware opportunity lies not in fabrication but in inference optimization software, edge AI deployment, energy-efficient data center architecture, and intelligent model orchestration - domains where India's world-class software talent creates a genuine competitive advantage.

1. India's Semiconductor Ambition

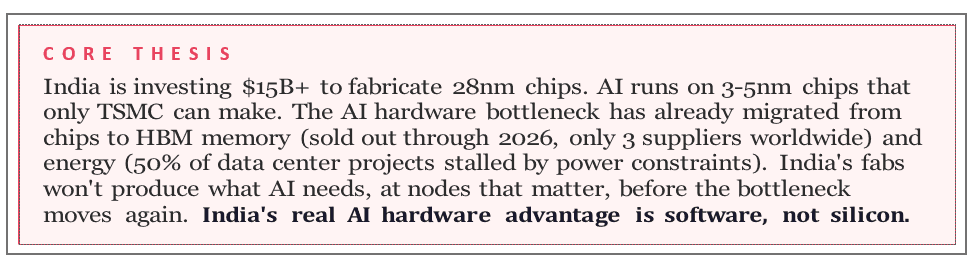

India's semiconductor mission represents the nation's most ambitious industrial technology bet. Under the India Semiconductor Mission (ISM), 10 projects have been approved: two fabrication plants and eight assembly, testing, marking, and packaging (ATMP/OSAT) facilities, with total investment commitments of approximately $17.3 billion.1 The centerpiece is the Tata-PSMC fab in Dholera, Gujarat - an $11 billion joint venture that reached 50% construction in early 2026 and is targeting trial production by late 2026.2

Figure 1. India Semiconductor Mission investment allocation. The Tata-PSMC fab accounts for 60% of total spending. Sources: ISM; IEEE Spectrum; India Briefing.

The ambition is understandable. India's semiconductor market was worth $38 billion in 2023 and is projected to reach $100-110 billion by 2030.3 Reducing import dependence is a legitimate strategic goal. But this paper asks a different question: does building fabs at 28-110nm nodes solve India's AI hardware problem? The answer is no - and the reasons are structural, not cyclical.

1 IEEE Spectrum, "India Injects $15 Billion Into Semiconductors" (2024). Tata-PSMC fab: 28-110nm nodes, 50K wafers/month.

2 India Semiconductor Mission / MeitY (March 2026). 10 approved projects: 2 fabs + 8 ATMP/OSAT. Investment commitments: ~$17.3B.

3 Dholera Times (April 2026). Tata fab at 50% construction. Trial production late 2026. Nodes: 28nm, 40nm, 55nm, 90nm, 110nm.

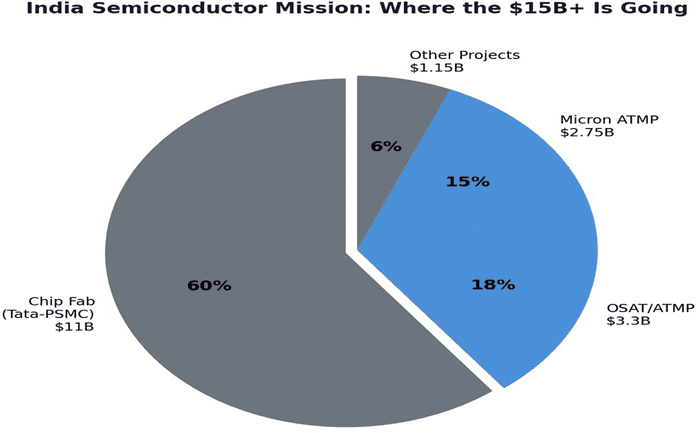

2. The Node Gap: What India Will Make vs. What AI Needs

The Tata-PSMC fab will produce chips at 28nm, 40nm, 55nm, 90nm, and 110nm - mature nodes used in automotive, display drivers, microcontrollers, and power management ICs. These are important products. They are also completely irrelevant to AI compute.

The AI accelerators that power frontier AI - NVIDIA's H100, B200, and the upcoming Rubin R100, Google's TPU v6, AMD's MI300X - are manufactured at 3-5nm by TSMC in Taiwan.4 The gap between India's planned 28nm capability and the 3nm frontier is not a gap that closes with time. It is seven to nine technology generations apart. TSMC spent decades and hundreds of billions of dollars reaching 3nm. India's fab will not bridge this gap in any foreseeable timeline.

Figure 2. The Node Gap. India's fabs will produce at 28-110nm. AI accelerators require 3-5nm. The gap is 7-9 technology generations. Sources: IEEE Spectrum; NVIDIA; TSMC.

This does not diminish the value of a 28nm fab for India's broader electronics ecosystem. Mature-node chips are used in billions of devices and were at the heart of the pandemic-era chip shortage. But framing this investment as solving India's AI hardware challenge - as it is frequently framed in policy discourse - is a category error.

4 Tech Insider, "The AI Data Center Power Crisis" (April 2026). 11 GW stalled without construction; 50% of projects delayed by power.

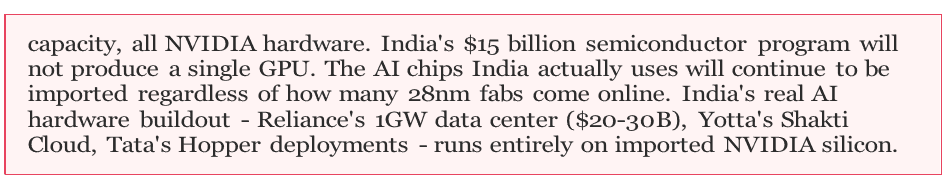

3. The Migrating Bottleneck

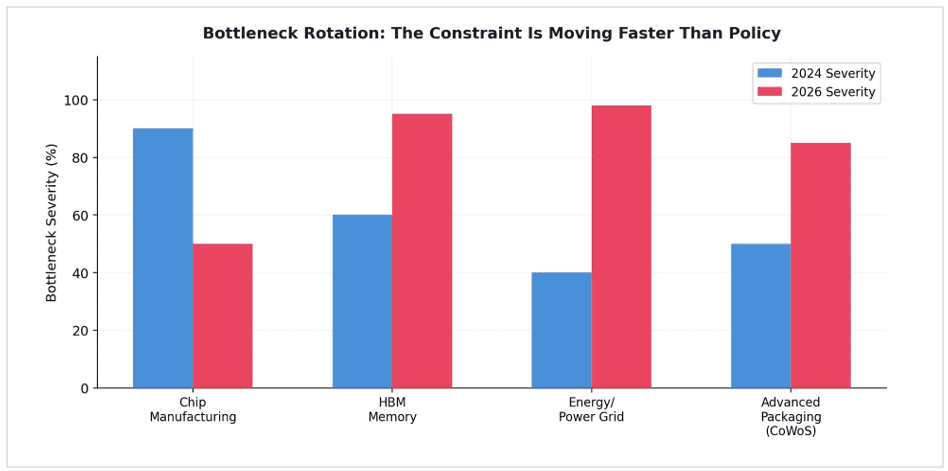

Even if India could fabricate frontier AI chips, the bottleneck has already moved. The AI hardware constraint is not a single, fixed problem. It is a migrating target that shifts faster than policy can follow.

Figure 3. The Migrating Bottleneck. The primary AI hardware constraint has shifted from chip manufacturing (2021-2022) to HBM memory (2025) to energy/power (2026). India's fabs target the 2021-2022 constraint. Sources: EnkiAI; TrendForce; Tech Insider.

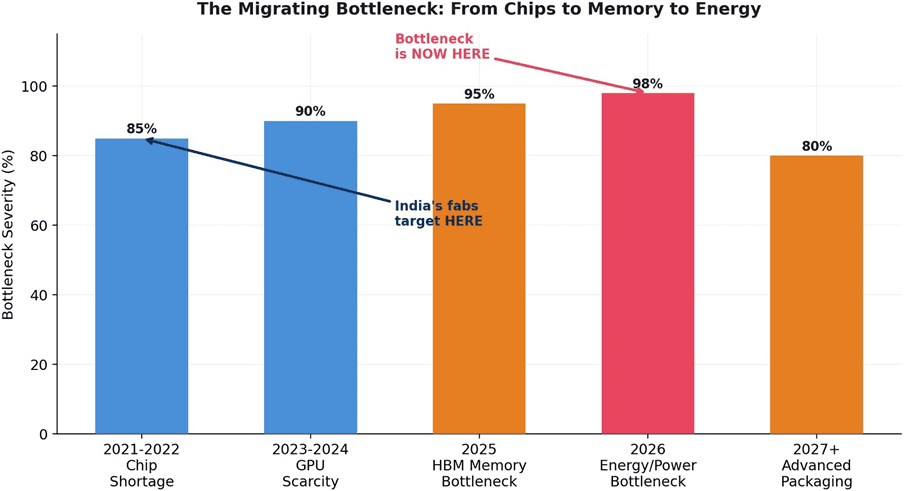

3.1 The HBM Memory Wall

High-bandwidth memory (HBM) has become AI's critical bottleneck. NVIDIA's Blackwell B200 GPU uses 192GB of HBM3E - a 140% increase from the H100's 80GB.5 Only three companies in the world manufacture HBM: SK Hynix, Samsung, and Micron. Micron's HBM capacity is sold out through calendar year 2026.6 HBM demand is growing 130% year-over-year, with DRAM prices projected to rise over 70% in 2026.7

5 Introl, "The AI Memory Supercycle" (Jan 2026). Micron HBM sold out through 2026. NVIDIA B200: 192GB HBM3E (140% increase from H100).

6 TrendForce, "Memory Wall Bottleneck" (Jan 2026). HBM demand growing 130% YoY. DRAM prices projected to rise 70%+ in 2026.

7 EnkiAI, "AI Power Grid Bottleneck: The 2026 Crisis" (April 2026). Primary risk is no longer chips but structural grid inability.

Figure 4. The HBM supply-demand gap. Demand growing 130% YoY vs. constrained supply. Only 3 manufacturers worldwide. Sources: TrendForce; Introl; Micron.

Samsung has struggled to meet NVIDIA's qualification standards for its 12-layer HBM3E chips, relegating the world's largest memory maker to a tertiary position.8 DRAM supply currently supports only about 15 gigawatts of AI infrastructure globally.9 This is not a chip problem. It is a memory problem - and India's semiconductor program does not address it.

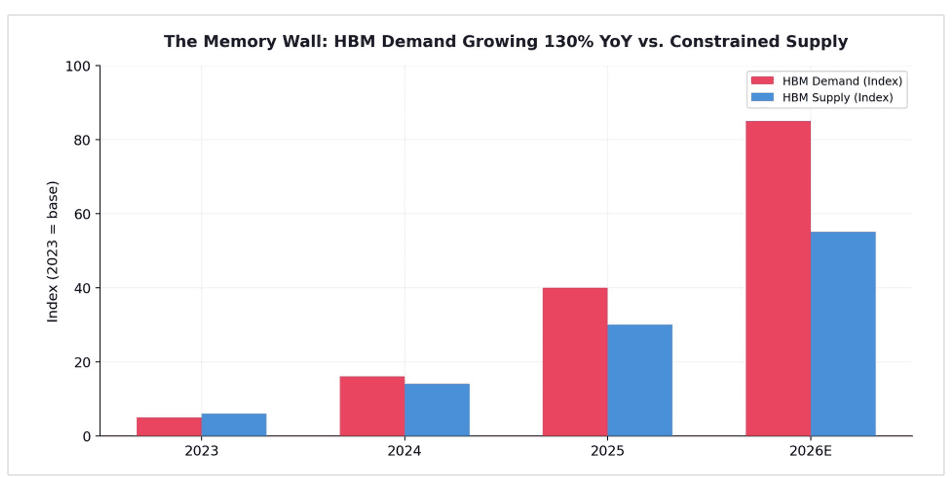

3.2 The Energy Wall

The most consequential bottleneck shift is from silicon to energy. As one analyst report concluded: 'The primary risk to AI expansion is no longer the availability of semiconductor chips, but the structural inability of electrical grids to meet the sector's exponential power demand.'10

8 Clarifai, "GPU Shortages 2026" (Jan 2026). DRAM supply supports only ~15 GW of AI infrastructure. Lead times: 36-52 weeks.

9 EnkiAI, "HBM Supply Crisis 2026" (Feb 2026). Only 3 companies make HBM. Samsung struggling with yield. SK Hynix at 80% yield.

10 Carnegie Endowment, "India's Semiconductor Mission: The Story So Far" (Aug 2025). 10 projects approved across multiple states.

Figure 5. The Energy Gap. AI data center power demand is outstripping available grid capacity, with the deficit widening through 2028. Sources: Tech Insider; IEA; Sightline Climate.

Up to 11 gigawatts of planned data center capacity for 2026 remains stalled without construction underway, with 50% of global projects facing delays due to power limitations and grid equipment shortages.11 Grid interconnection timelines now stretch to seven years or more. Hyperscalers are building their own dedicated power generation to bypass the public grid entirely. A single hyperscale AI data center requires 100-300 megawatts of continuous power - equivalent to a mid-sized city.
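To put the 100-300 megawatt range in annual terms, the arithmetic can be sketched as below. This is a rough illustration only: it assumes constant full-load operation for 8,760 hours a year (the "continuous power" in the text), and the function name is made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_gwh(power_mw: float) -> float:
    """Energy drawn in one year at a constant load, in gigawatt-hours."""
    return power_mw * HOURS_PER_YEAR / 1000  # MWh -> GWh


# The 100-300 MW range quoted for a single hyperscale AI data center.
for mw in (100, 300):
    print(f"{mw} MW continuous = {annual_energy_gwh(mw):,.0f} GWh per year")
```

Under that assumption a single site draws roughly 876 to 2,628 GWh per year, which is why grid interconnection rather than chip supply becomes the gating factor.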

11 Medium/elongated_musk, "The Next Five Years of Memory" (Oct 2025). HBM4 sampling 2025, volume production mid-2026.

Figure 6. Bottleneck Rotation. Chip manufacturing severity declined from 90% to 50% (2024-2026) while energy surged from 40% to 98% and HBM from 60% to 95%. Policy is chasing a moving target. Source: author framework.

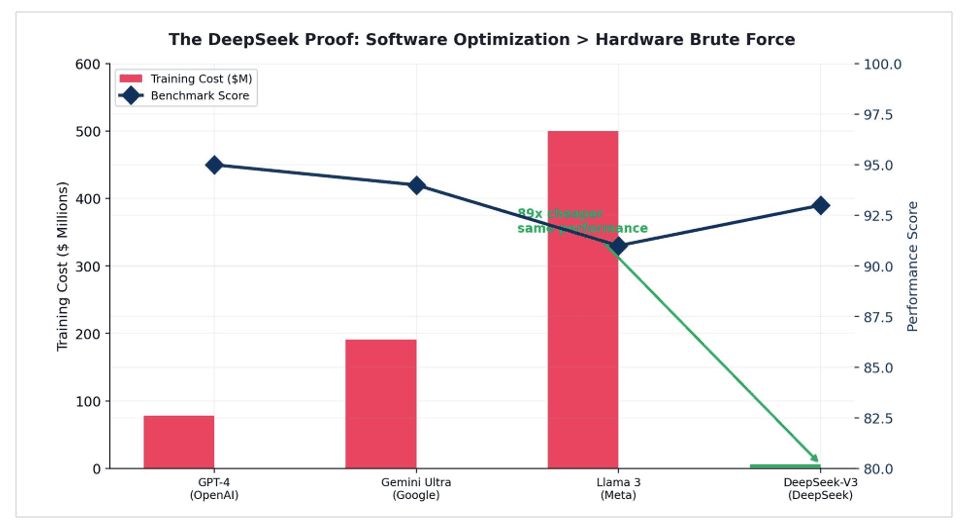

4. The DeepSeek Proof: Software Beats Silicon

The most powerful rebuttal to hardware-centric AI strategy came not from a policy paper but from a laboratory in Hangzhou. DeepSeek-V3, a 671-billion-parameter model, was trained for $5.6 million on H800 chips - hardware specifically designed to be less powerful than the H100s available to Western labs - and achieved performance parity with GPT-4o.12

Figure 7. The DeepSeek Proof. DeepSeek-V3 matched frontier performance at 89x lower training cost, using restricted hardware. Software optimization outweighs hardware brute force. Sources: FinancialContent; DeepSeek technical report.

DeepSeek proved that software innovation can effectively subsidize hardware deficiencies. Its Mixture-of-Experts architecture activates only 37 billion of 671 billion parameters per token, achieving frontier intelligence with a fraction of the compute. This architectural ingenuity - not more GPUs, not faster chips - was the decisive factor.
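The sparse-activation idea can be illustrated with a minimal sketch of generic top-k expert routing. This is not DeepSeek's actual router: the eight scalar "experts", the random router scores, and the function names are all assumptions chosen to keep the example small. Only the 37-billion-of-671-billion ratio comes from the text.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Toy setup: 8 hypothetical experts, each a simple scalar function.
experts = [lambda x, a=a: a * x for a in range(1, 9)]
random.seed(0)
scores = [random.gauss(0, 1) for _ in experts]
chosen = route_top_k(scores, k=2)
print("experts used:", [i for i, _ in chosen], "of", len(experts))

# Active share reported in the text: 37B of 671B parameters per token.
print(f"active share: {37 / 671:.1%}")
```

Only the selected experts run for a given token, so compute per token scales with the active share (about 5.5% here) rather than with the full parameter count.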

The implication for India is direct: the country's world-class software engineering talent is a more strategically valuable AI hardware asset than any fab. Inference optimization, model routing, KV cache compression, and edge deployment are software problems - and India has 5.4 million software developers, the second-largest pool in the world.

12 Digitimes (Feb 2026). India semiconductor drive "advancing unevenly" - packaging faster, wafer fab facing timeline risks.

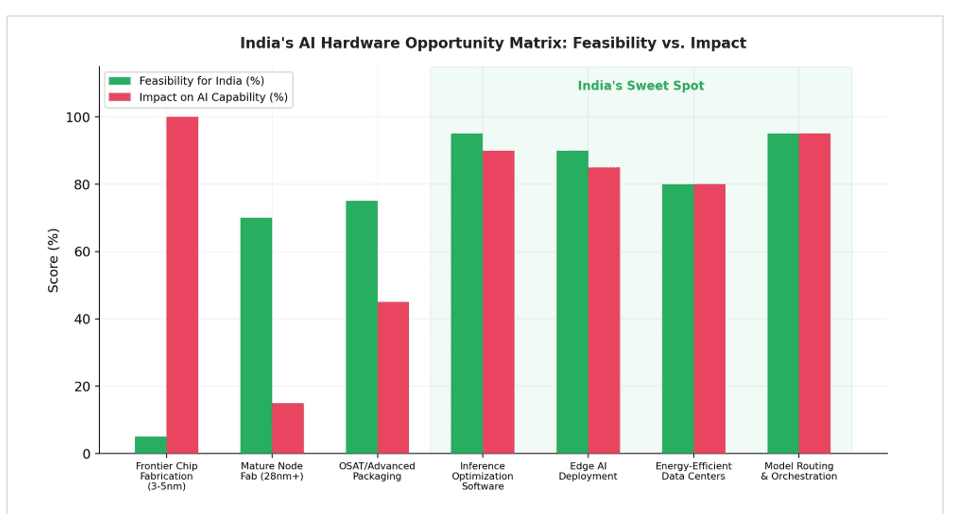

5. India's Real AI Hardware Opportunity

India's competitive advantage in AI hardware is not in fabrication. It is in the layers above the chip that determine how efficiently AI compute is used.

Figure 8. India's AI Hardware Opportunity Matrix. Frontier chip fab scores 5% feasibility. Inference optimization and model orchestration score 95% feasibility and 90%+ AI impact. Source: author framework.

5.1 Inference Optimization

Inference - not training - is where 85% of AI compute spend occurs. Optimizing how models serve queries is a software challenge that India is uniquely positioned to solve. Multi-model routing, semantic caching, KV cache compression, quantization, and prompt optimization can reduce inference costs by 50-93% without changing the underlying hardware. This is India's natural competitive terrain.
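A toy sketch of two of these techniques, routing and caching, shows where the savings come from. Everything specific here is hypothetical: the model names, the per-query costs, the word-count routing rule, and the exact-match cache, which stands in for a real semantic cache that would compare embeddings.

```python
from dataclasses import dataclass


@dataclass
class Model:
    name: str
    cost_per_query: float  # hypothetical, arbitrary currency units


SMALL = Model("small-model", 1.0)   # hypothetical cheap model
LARGE = Model("large-model", 10.0)  # hypothetical expensive model


def normalize(prompt: str) -> str:
    """Crude cache key; a real semantic cache would compare embeddings."""
    return " ".join(prompt.lower().split())


def pick_model(prompt: str) -> Model:
    """Toy routing rule (an assumption): long prompts go to the large model."""
    return LARGE if len(prompt.split()) > 12 else SMALL


def serve(prompts):
    """Return the total cost with caching and routing applied."""
    cache, cost = set(), 0.0
    for p in prompts:
        key = normalize(p)
        if key in cache:
            continue  # cache hit: no model call, no cost
        cache.add(key)
        cost += pick_model(p).cost_per_query
    return cost


prompts = [
    "What is HBM?",
    "what is  hbm?",  # duplicate after normalization -> cache hit
    "Summarize this long policy paper on semiconductor fabs, memory supply, "
    "and grid limits in detail",
    "Define OSAT",
]
baseline = len(prompts) * LARGE.cost_per_query  # everything sent to the large model
optimized = serve(prompts)
print(f"baseline={baseline}, optimized={optimized}, "
      f"saving={1 - optimized / baseline:.0%}")
```

With these made-up prices the four-query workload costs 12 units instead of 40. The figure illustrates the mechanism only and is not evidence for the 50-93% range cited above.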

5.2 Edge AI Deployment

India has 700 million smartphone users. Deploying lightweight AI models directly on devices - bypassing cloud infrastructure entirely - eliminates the energy, HBM, and GPU bottlenecks simultaneously. On-device models like Redrob 2B, designed to run on any smartphone, represent a path to AI access that requires no fabs, no data centers, and no imported GPUs. India's GPU market is just $485 million - tiny compared to China's $1.82 billion. But India's smartphone market is among the world's largest. The real AI hardware opportunity for India is not fabricating chips for data centers but optimizing AI to run on the devices already in every pocket.
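Whether a model fits on a phone is largely a question of weight memory, which the following back-of-envelope sketch estimates. The bit widths are illustrative assumptions: the text does not state the precision Redrob 2B uses, and the estimate covers weights only, not activations or the KV cache.

```python
def weight_footprint_gb(params: float, bits_per_weight: int) -> float:
    """Approximate memory for weights only, in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


PARAMS_2B = 2e9  # a 2-billion-parameter model, as in the text

# Assumed precisions: 16-bit floats, then 8-bit and 4-bit quantization.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_footprint_gb(PARAMS_2B, bits):.1f} GB")
```

At 4 bits per weight, a 2-billion-parameter model needs about 1 GB for its weights, against about 4 GB at 16 bits, which is the kind of reduction that makes on-device deployment plausible.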

5.3 Energy-Efficient Data Center Architecture

India has the world's fourth-largest renewable energy capacity. Designing AI data centers optimized for India's climate and energy mix - leveraging solar power, innovative cooling for tropical environments, and power-efficient inference hardware - is a genuine infrastructure opportunity that aligns with the actual bottleneck (energy) rather than the perceived one (chips).

5.4 OSAT and Advanced Packaging

India's OSAT/packaging sector is progressing faster than fabrication, with facilities already in pilot production.13 Advanced packaging - including chiplet integration, 2.5D/3D stacking, and system-in-package - is emerging as a critical bottleneck that India can realistically address. Unlike frontier fab, which requires decades of accumulated knowledge, advanced packaging is a newer field where India can build competitive capability within 3-5 years.

13 India Briefing (March 2026). Indian semiconductor market: $38B (2023), expected $100-110B by 2030.

6. Conclusion

India's semiconductor mission is building something real and valuable - a domestic mature-node chip ecosystem that reduces import dependence for automotive, IoT, and consumer electronics. This matters. But it does not solve India's AI hardware problem, and framing it as such creates a dangerous policy illusion: the belief that fabrication sovereignty equals AI sovereignty.

The numbers tell the story. India has 80,000+ GPUs for AI - all imported. Yotta controls 60-70% of this capacity, all NVIDIA hardware. Reliance is building a 1GW AI data center with NVIDIA Blackwell GPUs at an estimated $20-30 billion investment. Every major Indian AI initiative runs on imported silicon. India's $15 billion semiconductor program will not change this, because its fabs produce at 28-110nm while AI runs on 3-5nm chips that only TSMC can manufacture.

Meanwhile, the AI hardware bottleneck has migrated from chips (2021-2024) to HBM memory and energy infrastructure (2025-2026). India's fabs produce at nodes 7-9 generations behind what AI requires. HBM is manufactured by three companies, none Indian. Energy grid constraints are stalling 50% of global data center projects. These are the binding constraints on AI deployment - and $15 billion in 28nm fabs addresses none of them.

India's real AI hardware advantage is not silicon. It is software. DeepSeek proved that architectural ingenuity on restricted hardware can match hundred-million-dollar training runs on unrestricted hardware. India's 5.4 million developers, working on inference optimization, model routing, edge deployment, and energy-efficient AI architectures, represent a more powerful AI hardware strategy than any fab. The chip matters less than what you do with it. And what you do with it is a software problem - one that India is uniquely equipped to solve.

About Redrob Labs

Redrob (redrob.io) builds large language models and AI tools for the next three billion users. Founded in 2018, the company operates a proprietary multi-model ensemble architecture delivering 90% of frontier performance at 5% of the cost - purpose-built for the unit economics of India and emerging markets. It is headquartered in New York, with offices in Seoul, New Delhi, and Mumbai, and is backed by world-class VC firms.

Redrob Labs is the research division of Redrob. Our work is published at redrob.io/research.