How AI Is Creating the Largest Wage Arbitrage in Modern Labor History - and Why It Rewards Domain Experts, Not Engineers

Authors:

Felix Kim & Redrob Research Labs

Date:

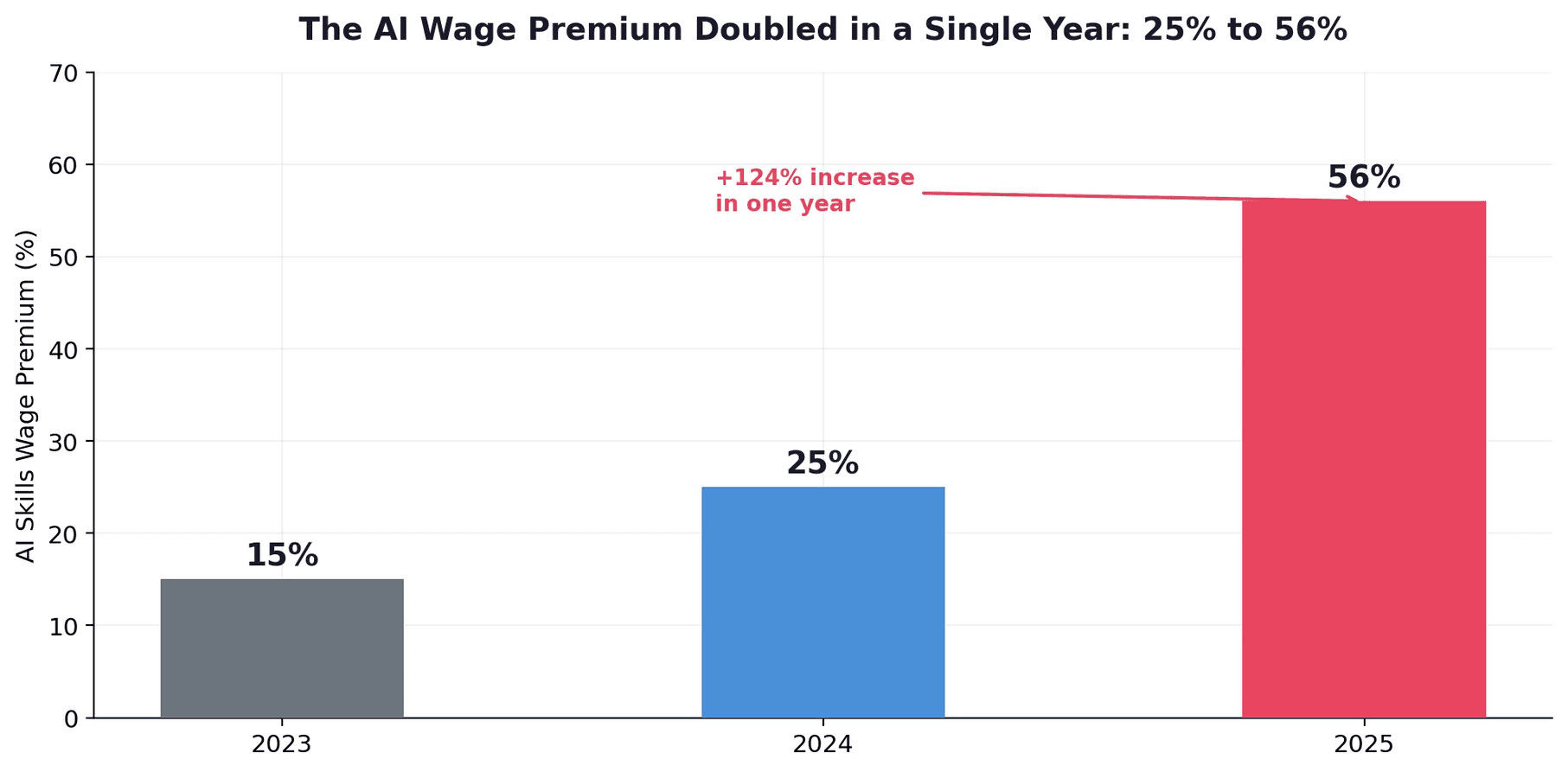

PwC's 2025 Global AI Jobs Barometer, the largest study of its kind, analyzed nearly one billion job postings across six continents and found that workers with AI skills earn a 56% wage premium over workers in identical roles without those skills. The premium more than doubled from 25% in a single year. The conventional interpretation is that this data supports the "learn AI engineering" career narrative. This paper argues the opposite.

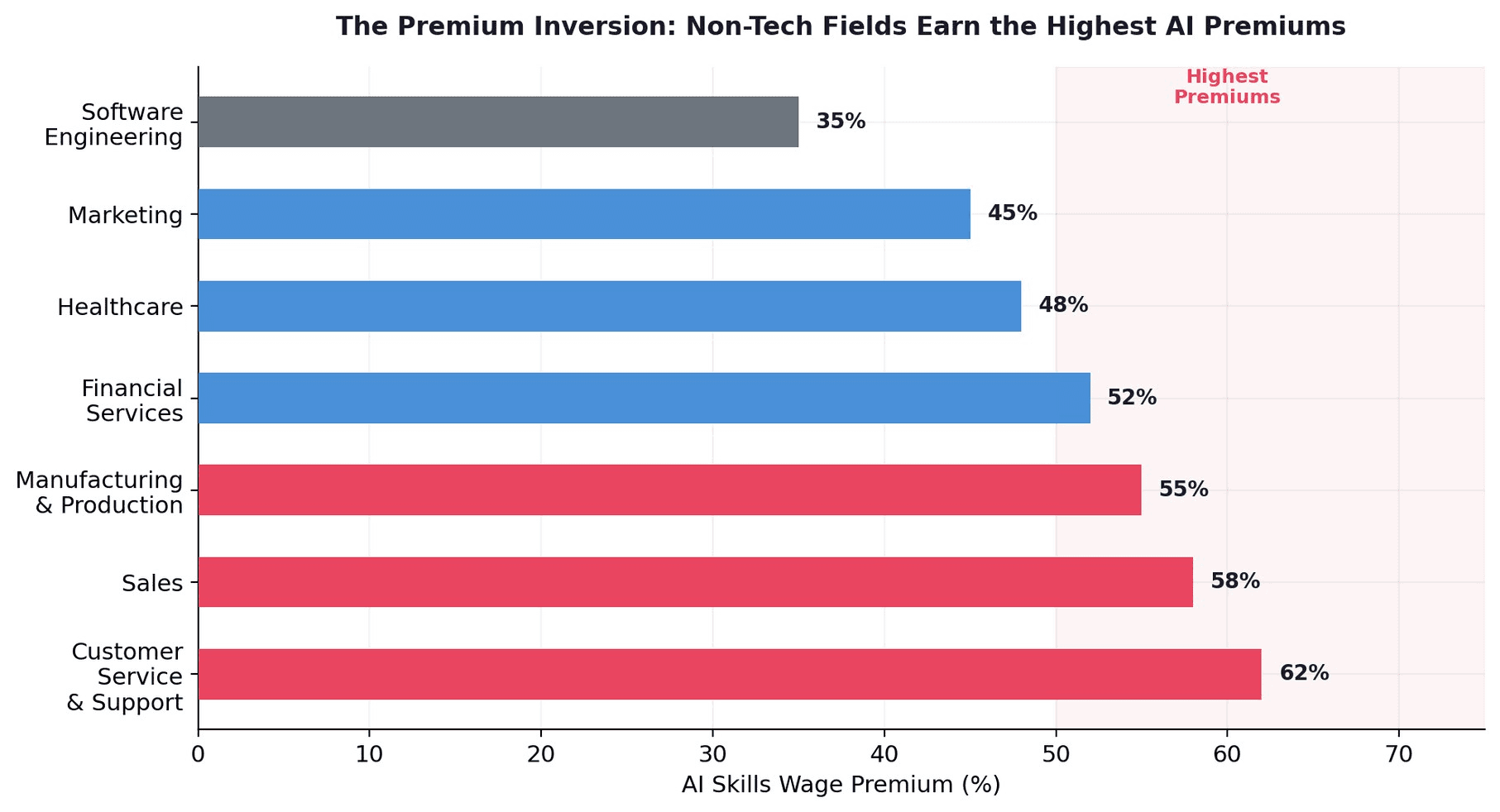

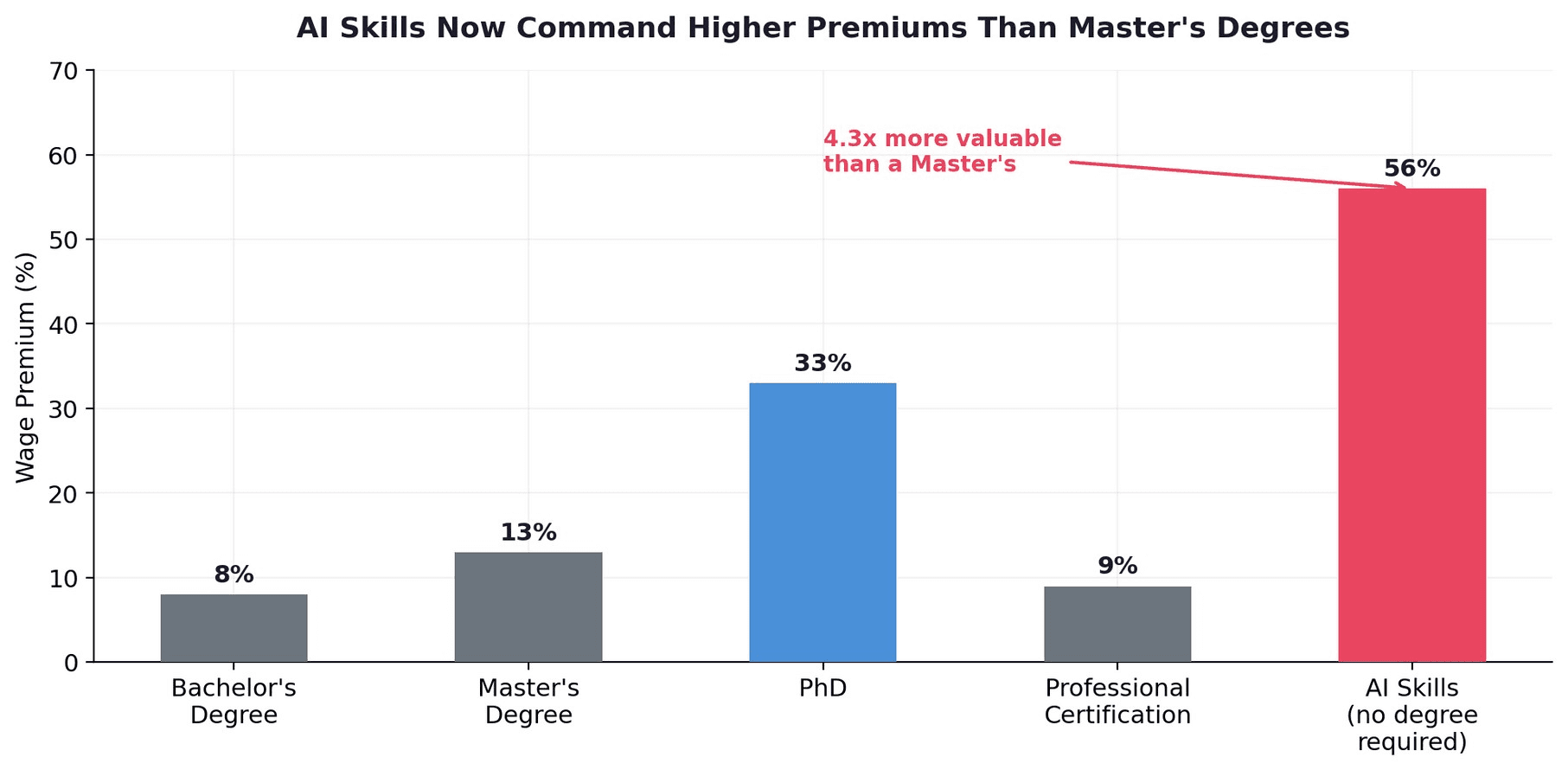

The 56% premium is not primarily an AI engineering phenomenon. It is a domain expert phenomenon. The fields with the largest AI premiums are customer service, sales, and manufacturing — not software engineering, which shows one of the lowest AI premiums. AI skills now command higher wage premiums than master's degrees (56% vs. 13%). Degree requirements are falling fastest in AI-exposed jobs. And the premium widens dramatically with seniority: 6% at entry level, exceeding 70% at the C-suite.

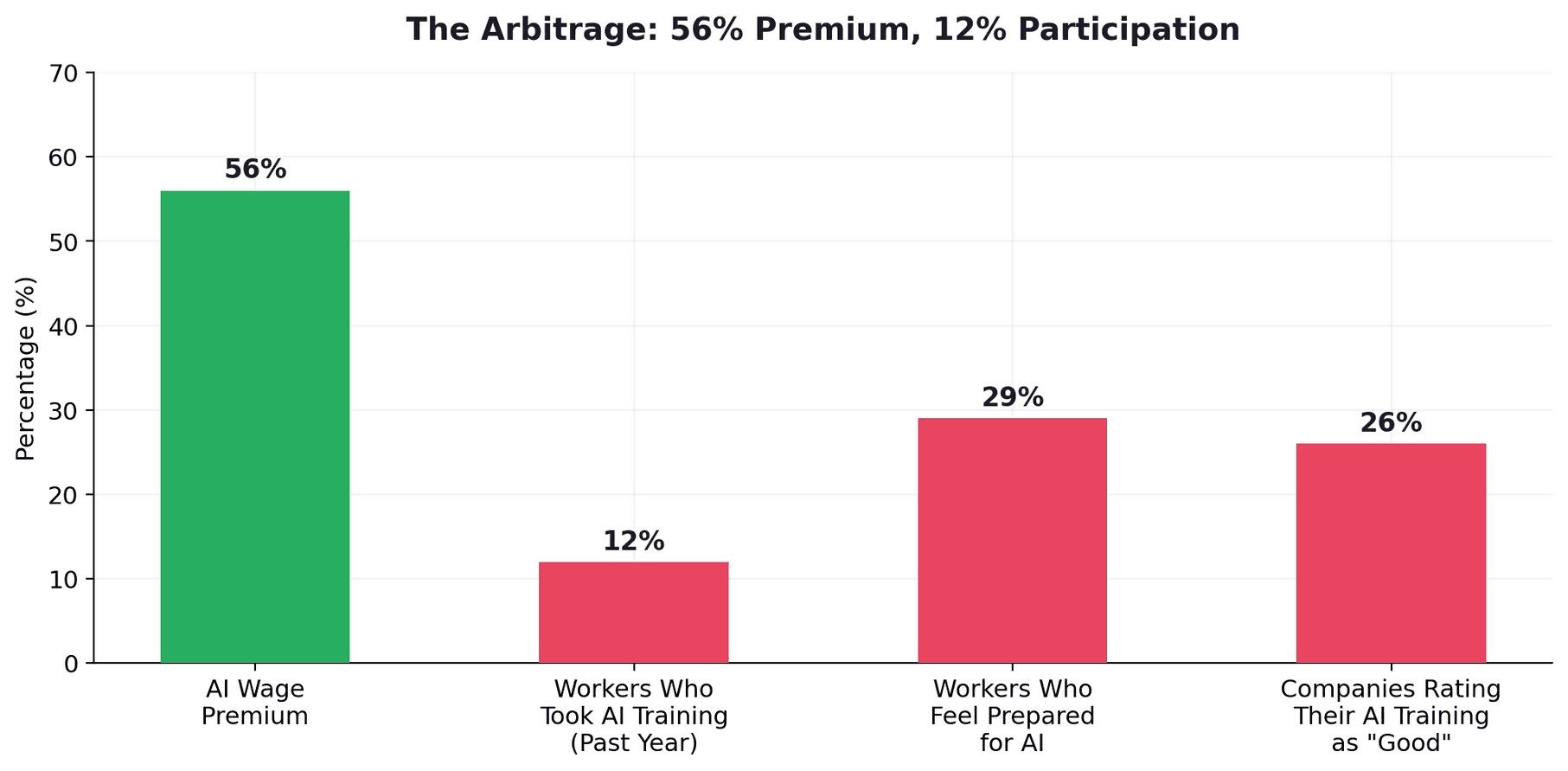

Yet only 12% of employed adults have taken any AI training in the past year, and 74% rate their employer's AI training programs as "average to poor." The result is the largest wage arbitrage in modern labor history: a 56% premium available to anyone who develops AI competency in their existing domain, with 88% of the workforce not yet competing for it.

CORE THESIS

The 56% AI wage premium is not for AI engineers. It is for anyone with AI skills - and it is highest in non-technical fields like customer service (62%), sales (58%), and manufacturing (55%). AI skills now outvalue master’s degrees (56% vs 13%). Only 12% of workers are competing for this premium. The largest wage arbitrage in modern labor history is not in learning to build AI. It is in learning to use AI in the job you already have.

1. The 56% Premium

In June 2025, PwC published its Global AI Jobs Barometer — an analysis of nearly one billion job postings from six continents and thousands of company financial reports. The headline finding: workers with AI skills earn a 56% wage premium over workers in identical roles who lack those skills. The premium had more than doubled from 25% the prior year. Jobs requiring AI skills grew 7.5% even as total job postings fell 11.3%. This is not a niche finding. It is the single largest skill-based wage premium in the modern labor market.

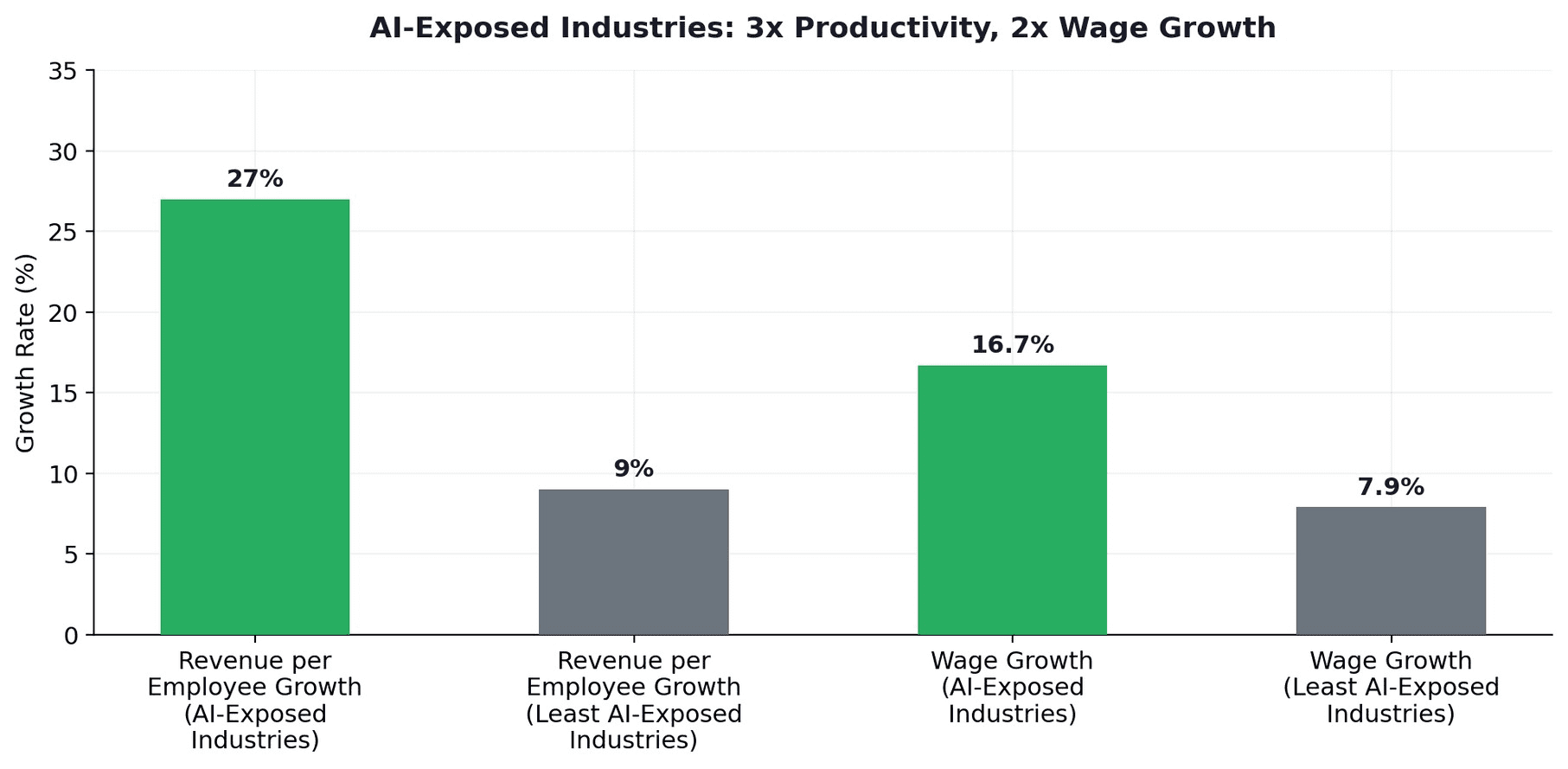

The productivity data explains why companies pay this premium. Industries most exposed to AI saw three times the growth in revenue per employee (27%) of those least exposed (9%). Wages are growing twice as fast in AI-exposed industries. Companies are not paying the premium because AI skills are trendy. They are paying because AI-skilled workers generate measurably more revenue per person.

2. The Premium Inversion: It's Not Where You Think

The counterintuitive finding is where the premium is largest. According to CNBC's analysis of Lightcast labor data, the fields with the biggest AI salary premiums are not software engineering or data science. They are customer service and support, sales, and manufacturing and production. Software engineering — the field everyone associates with AI — actually shows one of the lowest AI premiums, because the baseline salary is already high and AI skills are already expected.

The logic is straightforward but underappreciated. A customer service agent who can orchestrate AI workflows to resolve 3x more tickets per hour creates enormous incremental value relative to their baseline salary. A marketing manager who uses AI to generate, test, and optimize campaigns at 10x the speed of manual processes delivers disproportionate ROI. In contrast, a software engineer who adds AI skills to an already-technical role sees a smaller percentage lift because their baseline is higher and the incremental productivity gain is less dramatic.
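The baseline effect described above can be illustrated with simple arithmetic. The sketch below assumes two hypothetical salaries and one hypothetical amount of AI-driven value passed through to pay. None of these figures come from the PwC or Lightcast data; they serve only to show why the same absolute gain is a larger percentage of a lower baseline.

```python
def percentage_lift(baseline_salary: float, added_pay: float) -> float:
    """Return the pay increase as a percentage of the baseline salary."""
    return 100 * added_pay / baseline_salary


# Hypothetical figures chosen for illustration only (not from the source data).
ADDED_PAY = 20_000                    # same absolute gain in both roles
SUPPORT_AGENT_BASELINE = 45_000       # assumed lower-baseline role
SOFTWARE_ENGINEER_BASELINE = 150_000  # assumed higher-baseline role

agent_lift = percentage_lift(SUPPORT_AGENT_BASELINE, ADDED_PAY)
engineer_lift = percentage_lift(SOFTWARE_ENGINEER_BASELINE, ADDED_PAY)

print(f"Support agent lift: {agent_lift:.1f}%")
print(f"Software engineer lift: {engineer_lift:.1f}%")
```

Under these assumed numbers the identical dollar gain is more than three times larger, in percentage terms, for the lower-baseline role.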

Domain specialists with AI skills earn 30-50% more than generalist AI engineers at equivalent experience levels. The market is clear: the most valuable AI skill is not building AI. It is applying AI to a specific domain where you already have expertise.

2.1 The Data Is Unambiguous: 51% of AI Jobs Are Outside Tech

Lightcast's analysis of 1.3 billion job postings provides the definitive evidence. As of 2024, 51% of all job postings requiring AI skills are outside IT and computer science occupations. Since ChatGPT's launch in 2022, job postings mentioning generative AI skills grew 800% for non-tech roles. HR leads all sectors with a 66% growth rate in AI skill demand. Marketing and PR postings requiring AI skills are growing at 50% annually. Education, currently the sector with the lowest AI adoption, is seeing 200% growth in generative AI requirements.

The salary data compounds: one AI skill adds 28% ($18,000/year). Two or more AI skills add 43%. In HR and non-tech fields specifically, AI literacy alone drives a 35% salary uplift. In marketing and sales, applied AI skills trigger average pay bumps of approximately 43%, with senior specialists earning up to $250,000 in total compensation.
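The dollar figure quoted for one AI skill implies a baseline salary, which in turn gives a rough dollar value for the two-skill premium. The sketch below assumes both percentages apply to the same baseline, which the source does not state; the variable names are illustrative.

```python
# Figures quoted in the text above.
ONE_SKILL_PREMIUM = 0.28      # 28% uplift for one AI skill
ONE_SKILL_DOLLARS = 18_000    # stated dollar value of that uplift per year
TWO_SKILL_PREMIUM = 0.43      # 43% uplift for two or more AI skills

# Assumption: both premiums are measured against the same baseline salary.
implied_baseline = ONE_SKILL_DOLLARS / ONE_SKILL_PREMIUM
two_skill_dollars = implied_baseline * TWO_SKILL_PREMIUM

print(f"Implied baseline salary: ${implied_baseline:,.0f}")
print(f"Implied two-skill uplift: ${two_skill_dollars:,.0f} per year")
```

On that assumption the baseline works out to roughly $64,000 and the two-skill uplift to roughly $27,600 per year.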

2.2 AI Has Left the Engineering Department

As the Lightcast data above shows, the majority of AI-related job postings (51%) now sit outside traditional IT and computer science roles. LinkedIn's own job-market analysis confirms that a large share of AI-related postings are now for marketing, sales, HR, and operations, roles where AI literacy rather than deep coding is the differentiator.

The counterintuitive career advice: Do not quit your job to become an AI engineer. Learn AI skills for the job you already have. A marketing manager who masters prompt engineering and AI-assisted campaign optimization earns a larger percentage salary increase than an ML engineer who learns the same skills. The premium rewards domain expertise + AI augmentation, not AI expertise alone.

3. AI Skills Are Replacing Degrees as the Primary Value Signal

The AI premium now exceeds the premium for traditional educational credentials. The Oxford Internet Institute found that AI skills provide a 23% wage premium in the UK — nearly double the value of a master's degree (13%) and approaching PhD-level premiums (33%). The global PwC figure of 56% dwarfs all traditional credential premiums.

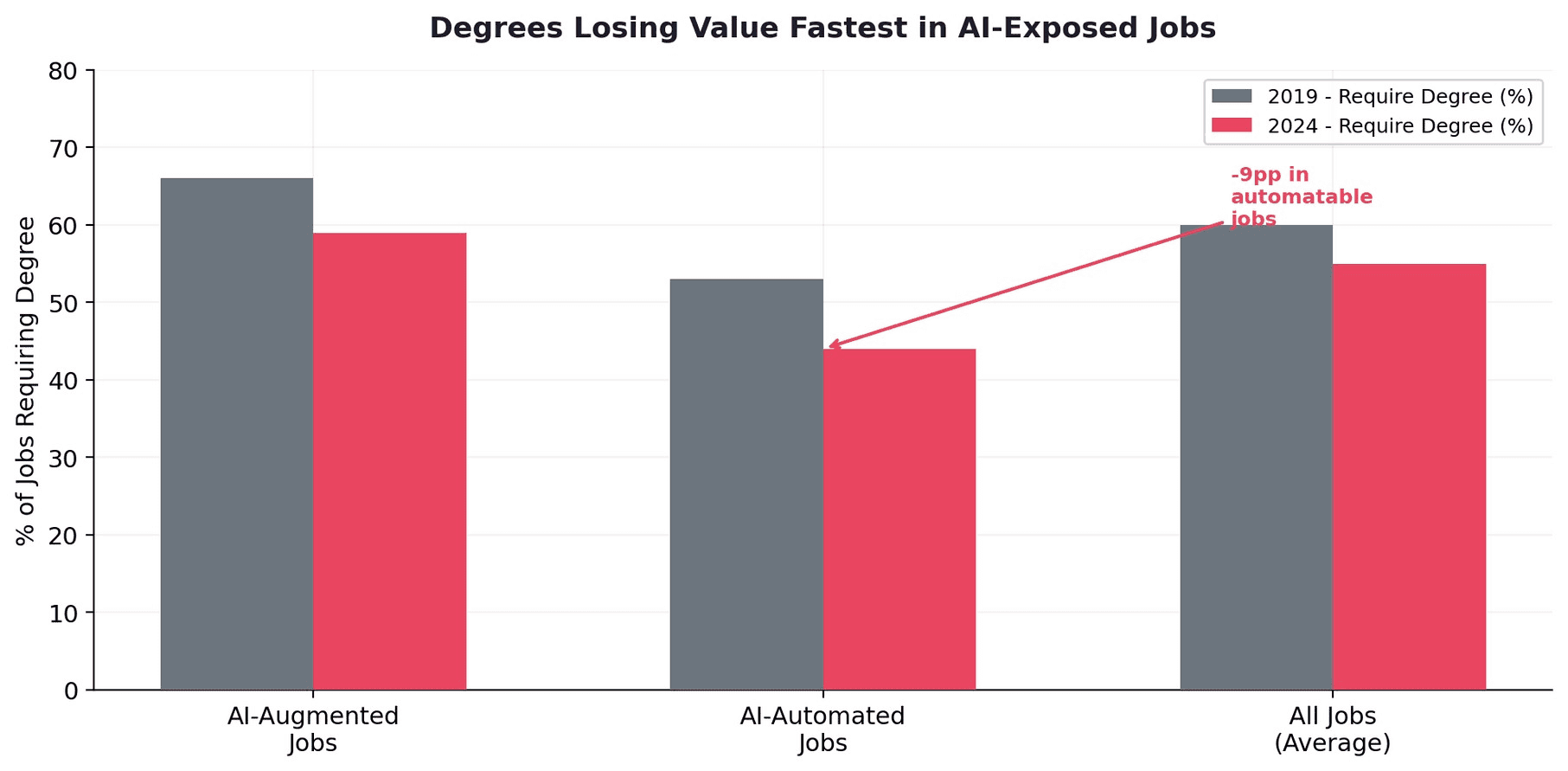

Simultaneously, employer demand for formal degrees is declining fastest in AI-exposed jobs. The percentage of AI-augmentable jobs requiring a degree fell from 66% to 59% between 2019 and 2024. For AI-automatable jobs, the decline was even steeper: 53% to 44%.

This creates a profound shift in the returns on human capital investment. A two-year master's degree costing $50,000-$150,000 yields a 13% wage premium. Six months of focused AI skills development — often available for under $5,000 through online platforms — yields a 56% premium. The ROI of AI skills investment exceeds traditional education by an order of magnitude.
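The order-of-magnitude claim can be checked with a rough calculation. The sketch below uses only the figures quoted above and compares "premium percentage points per $1,000 spent". That metric and the function name are assumptions made for illustration, and the calculation ignores forgone earnings and time to completion.

```python
def premium_points_per_1000(premium_pct: float, cost_usd: float) -> float:
    """Wage-premium percentage points gained per $1,000 of cost."""
    return premium_pct / (cost_usd / 1000)


# Figures quoted in the text: 56% for under $5,000; 13% for $50,000-$150,000.
ai_skills = premium_points_per_1000(56, 5_000)
masters_low_cost = premium_points_per_1000(13, 50_000)
masters_high_cost = premium_points_per_1000(13, 150_000)

ratio_vs_cheap_degree = ai_skills / masters_low_cost
ratio_vs_costly_degree = ai_skills / masters_high_cost

print(f"AI skills return {ratio_vs_cheap_degree:.0f}x to "
      f"{ratio_vs_costly_degree:.0f}x more premium per dollar")
```

On these inputs the gap is roughly 43x to 129x, so "an order of magnitude" is, if anything, a conservative description of the quoted figures.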

4. The Seniority Multiplier

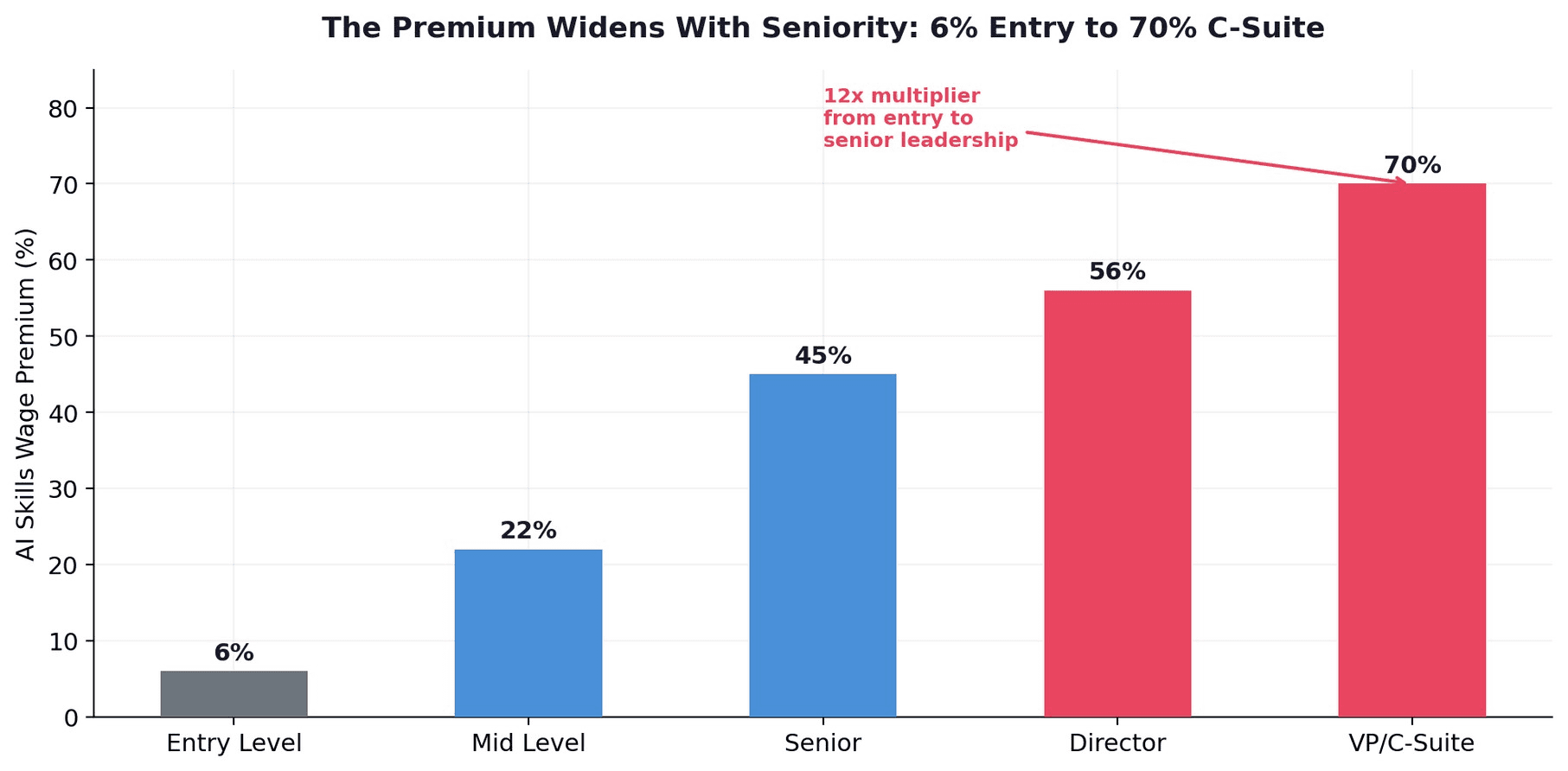

The AI premium does not apply equally across career stages. It widens dramatically with seniority: 6% at entry level, 22% at mid-level, 45% at senior level, 56% at director level, and exceeding 70% at the VP and C-suite level.

The implication is that AI skills are most valuable not for entry-level workers seeking their first job, but for experienced professionals in senior roles. A VP of Marketing who develops AI fluency can command a 70% premium over a peer without AI skills. A Chief Revenue Officer who can orchestrate AI-powered sales workflows captures the largest absolute dollar premium in the entire labor market. The career advice "learn AI" is correct — but the target audience is wrong. It should be directed at senior leaders, not junior engineers.

5. The Arbitrage: 56% Premium, 12% Participation

Despite the 56% premium, only approximately 12% of employed adults have taken any AI-related training in the past year. Among workers who identified AI as their biggest skill gap, 74% rated their employer's AI training programs as "average to poor." Over a third of workers lack confidence they have the skills to succeed in their current roles, and 41% worry their job security is at risk. Yet the remaining 88% or so have taken no AI training at all.

This is a textbook wage arbitrage. The premium exists because AI-skilled workers generate measurably more value (three times the growth in revenue per employee in AI-exposed industries). The premium persists because supply is constrained (1.3 million AI job openings vs. 645,000 available workers in the US alone). And the premium is accessible to anyone in any domain, not just engineers. By 2030, 70% of the skills used in most jobs will change, with AI as the primary driver. The window for maximum premium capture is now, before AI skills become table stakes.
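The supply constraint can be expressed as a simple ratio. The sketch below uses the two US figures quoted above; the variable names are illustrative, and it assumes each available worker fills exactly one opening.

```python
# US figures quoted in the text above.
openings = 1_300_000          # AI job openings
available_workers = 645_000   # workers available to fill them

openings_per_worker = openings / available_workers
unfilled_openings = openings - available_workers
share_fillable = available_workers / openings

print(f"Openings per available worker: {openings_per_worker:.2f}")
print(f"Openings left unfilled: {unfilled_openings:,}")
print(f"Share of openings that can be filled: {share_fillable:.1%}")
```

On these figures there are about two openings for every available worker, so roughly half of the openings cannot be filled from the current supply.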

6. The Skills Earthquake

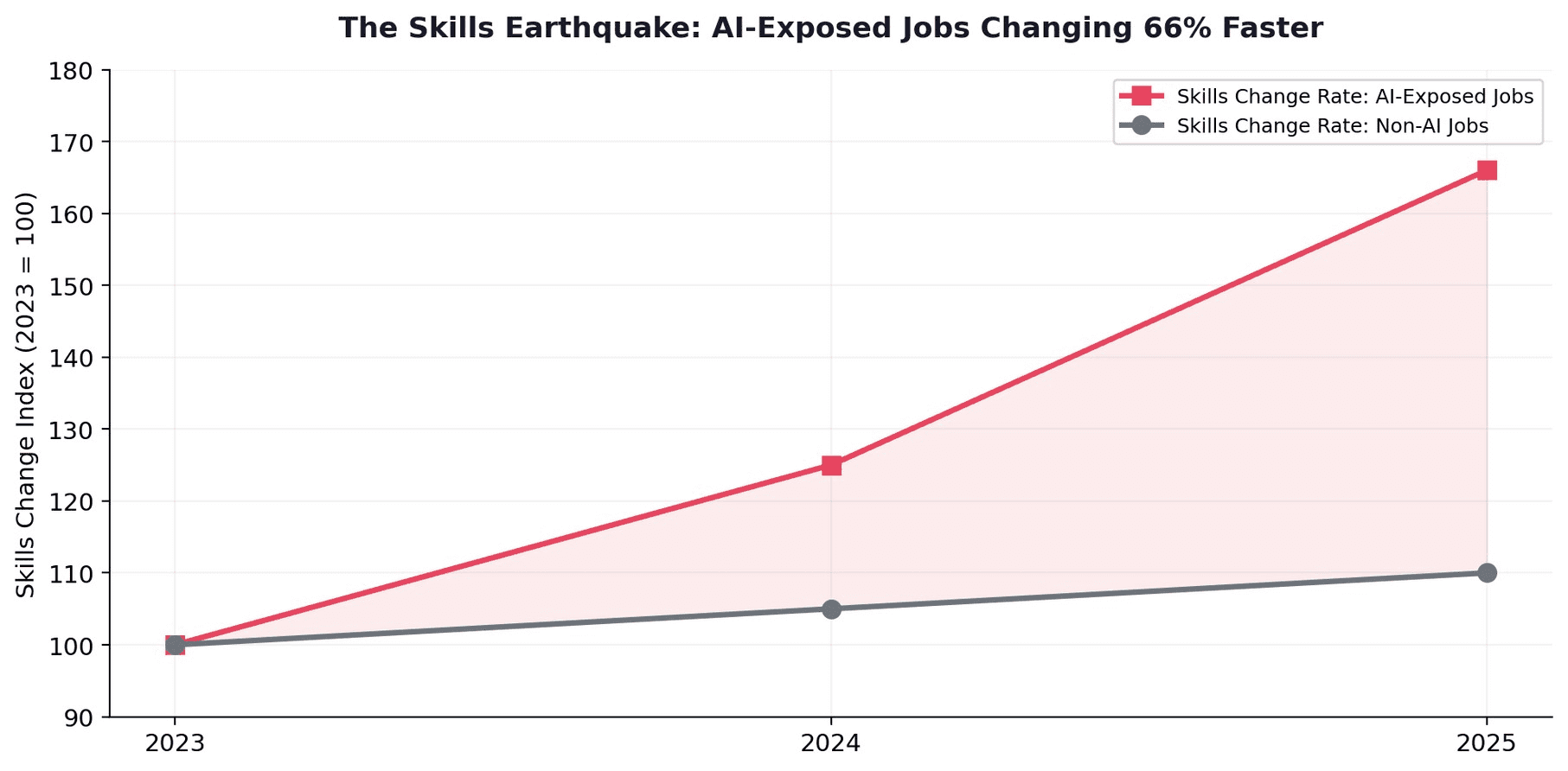

The skills required for AI-exposed jobs are changing 66% faster than for non-AI jobs, up from 25% faster the prior year. This acceleration is not slowing. It is compounding.

One gender dimension deserves attention: in every country PwC analyzed, more women than men are in AI-exposed roles. This suggests the skills pressure facing women will be higher — and the premium opportunity greater, if captured.

Confounding the "AI will destroy jobs" narrative, jobs are actually growing in virtually every type of AI-exposed occupation — including highly automatable ones. AI-exposed job postings grew 38% year-over-year. AI is not eliminating jobs. It is restructuring them — and paying a 56% premium to the workers who restructure fastest.

6.1 The Adoption Divide: 84% of Developers vs. 9% of Enterprises

The adoption divide reveals the arbitrage most clearly. Stack Overflow's 2025 survey found that 84% of software developers are already using or planning to use AI tools in their workflows. Microsoft and LinkedIn's 2024 Work Trend Index documented a 142x increase in members adding AI skills to profiles and a 160% increase in non-technical professionals taking AI courses. Yet Gartner found only 9% of organizations have reached anything close to AI maturity. The individual is moving faster than the institution.

A KPMG study found that 44% of US employees use AI tools at work secretly, without management approval. The shadow AI economy is already here. The workers capturing the 56% premium are not waiting for permission.

7. Implications for Employers and Emerging Markets

7.1 For Employers

The 56% premium represents both a cost and an opportunity. Companies paying the premium for external AI hires could instead invest a fraction of that amount in upskilling their existing workforce. The most effective strategy is not to hire AI engineers for every function but to create AI-augmented versions of the domain experts you already employ. A customer service team trained in AI orchestration delivers more value than an AI engineering team that does not understand customer service.

7.2 For Emerging Markets

For emerging markets, the 56% premium represents a leapfrog opportunity. India has 490,000+ new AI jobs annually — the largest growth in any developing market. Nasscom estimates India still needs over one million more trained AI professionals by 2026. AI and Machine Learning Specialist roles in India grew 176% year-over-year.

The geographic arbitrage is powerful: a marketing manager in Mumbai who masters AI workflow orchestration can access global salary premiums via remote work while living in a lower-cost market. The declining relevance of formal degrees means the premium is accessible to workers without traditional educational credentials — precisely the demographic that dominates emerging market workforces.

For a customer support agent in Mumbai, a marketing coordinator in Lagos, or a supply chain manager in Jakarta, the path to a 56% raise is not a computer science degree. It is six months of focused AI skills development applied to the work they already do.

7.3 The Salary Inversion

One emerging phenomenon deserves attention: at some organizations, junior AI professionals now earn more in total compensation ($173,500) than the director-level average ($152,600). This "salary inversion" reflects the acute scarcity of hands-on AI deployment skills. Companies are paying more for a junior engineer who can deploy AI models in production than for a senior manager who oversees teams but lacks AI fluency. The implication for HR strategy is clear: the traditional seniority-based compensation model breaks down in the AI era. Skills, not titles, determine value.

8. Conclusion

The 56% premium is the single most important data point in the 2026 labor market. It is larger than any traditional credential premium. It is available in every industry. It is largest in non-technical domains — customer service (62%), sales (58%), manufacturing (55%). It stacks: one AI skill yields 28%, two yield 43%, advanced fluency yields 56%. It widens dramatically with seniority: 6% at entry level to 70%+ at the C-suite. And it is being captured by only 12% of the workforce while 51% of AI job postings now sit outside traditional IT roles entirely.

This is not an AI engineering story. It is a domain augmentation story. Since ChatGPT launched, non-tech AI job postings grew 800%. HR professionals with AI literacy see 35% salary uplifts. Marketing managers with applied AI skills see 43% bumps. The highest-value career move in 2026 is not learning to build AI. It is learning to use AI in the field where you already have expertise.

The window is open. The arbitrage is live. And 88% of the workforce has not yet shown up to collect.

Citation: Redrob Labs (2026). "The 56% Premium: How AI Is Creating the Largest Wage Arbitrage in Modern Labor History." Redrob White Paper v1.0. Available at: redrob.io/research.

About Redrob Labs:

Redrob (redrob.io) builds large language models and AI tools for the next three billion users. Founded in 2018, the company operates a proprietary multi-model ensemble architecture delivering 90% of frontier performance at 5% of the cost - purpose-built for the unit economics of India and emerging markets. Headquartered in New York with offices in Seoul, New Delhi, and Mumbai. Backed by world-class VC firms. Redrob Labs is the research division of Redrob. Our work is published at redrob.io/research.