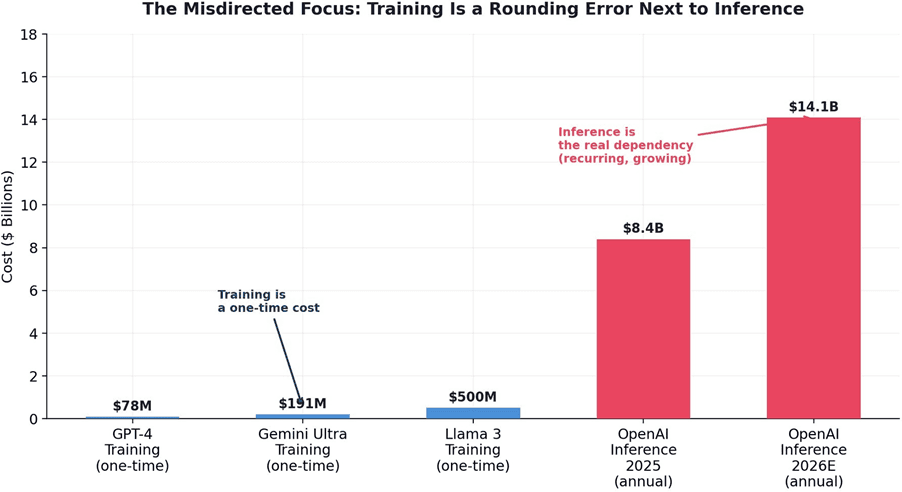

The AI Sovereignty Trap: Why 50 Nations Are Spending $100 Billion on the Wrong Problem

Authors:

Felix Kim & Redrob Research Labs

Date:

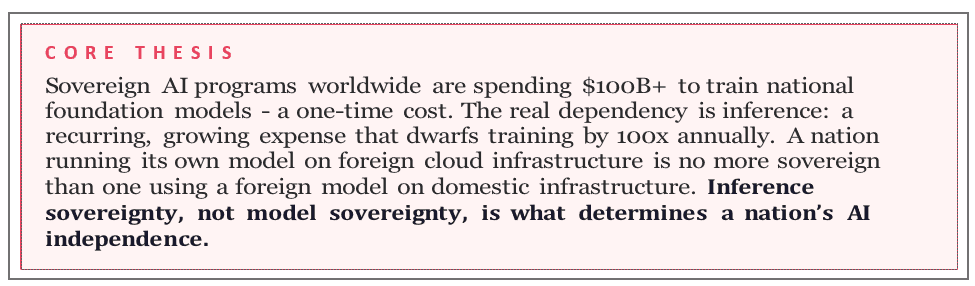

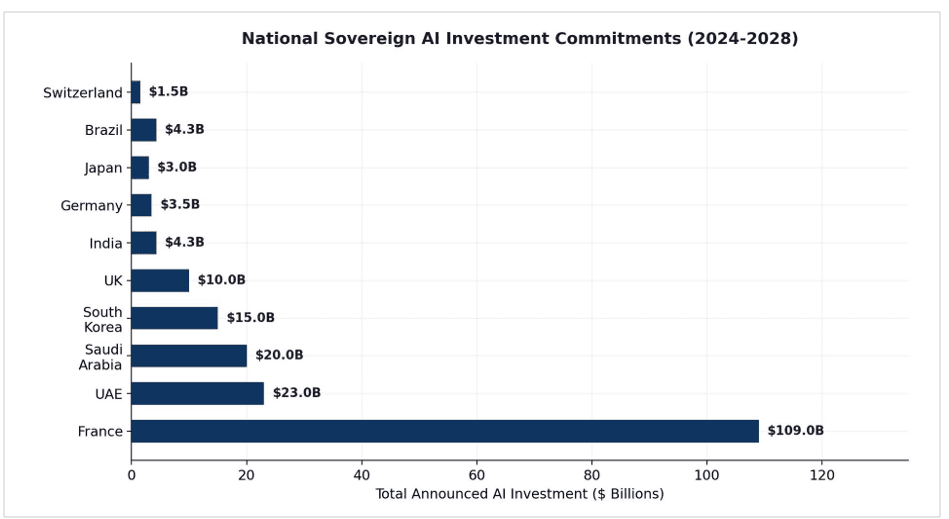

By January 2026, more than 130 government-backed sovereign AI projects were underway across 50+ countries. France alone has committed 109 billion euros. The stated goal is AI independence. This paper argues that the vast majority of these programs are solving the wrong problem. Nearly all national AI strategies focus on training sovereign foundation models, a one-time cost that, even at frontier scale, is a fraction of the ongoing expense of inference: the compute required to serve every AI query, every day, to every user. OpenAI’s training cost for GPT-4 was approximately $78 million. Its inference costs in 2025 were $8.4 billion and are projected to reach $14.1 billion in 2026. A country that trains its own national LLM but runs it on American cloud infrastructure has not achieved sovereignty. It has built a flag and planted it on rented land. We present evidence that true AI sovereignty is determined not by who trained the model but by who controls the inference infrastructure, and that the convergence of open-weight models to within 1-2 percentage points of frontier performance makes model training the least strategically important layer of the AI stack for sovereignty purposes. The nations that will control their AI destiny are not those building the most expensive models but those building the most efficient inference architectures.

1. The Sovereign AI Gold Rush

The concept of sovereign AI - a nation’s capacity to produce artificial intelligence using its own infrastructure, data, workforce, and business networks - has moved from policy papers to presidential agendas in under two years. In a November 2024 survey, roughly 40 government-backed sovereign AI projects were tracked across approximately 30 countries. By January 2026, that number had more than tripled to nearly 130 projects across more than 50 countries.

Figure 1. Government-backed sovereign AI projects more than tripled from ~40 to ~130 between November 2024 and January 2026, spanning 50+ countries. Source: Lawfare / CNAS Sovereign AI Index.

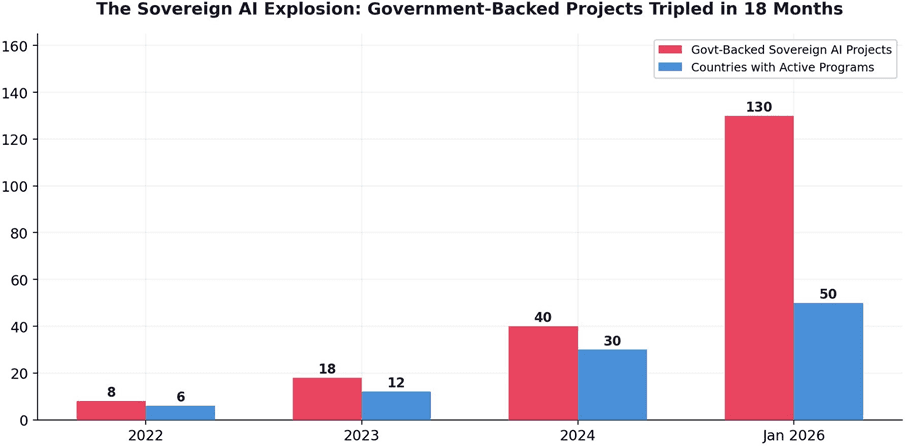

The investments are enormous. France has committed 109 billion euros to AI infrastructure. The UAE, Saudi Arabia, and South Korea have each pledged tens of billions. Global spending on sovereign AI systems is projected to surpass $100 billion by 2026.2 Germany launched SOOFI, an open-source foundation model initiative. Switzerland released Apertus. Poland built PLLuM. Ukraine is developing a national LLM on Google’s Gemma architecture.34

1Lawfare, "The Sovereignty Gap in U.S. AI Statecraft" (Feb 2026). ~130 sovereign AI projects across 50+ countries by Jan 2026.

2BCG, "For Most Countries, AI Sovereignty Is an Illusion. Resilience Is Real" (March 2026).

3Sacra, "OpenAI Revenue, Valuation & Funding" (April 2026). Inference costs: $8.4B (2025), projected $14.1B (2026).

4Epoch AI / AI Index, "The Rising Costs of Training Frontier AI Models" (2024). Training costs growing at 2.4x/year.

Figure 2. National sovereign AI investment commitments (2024-2028). France leads with €109B. Sources: government announcements; RAISE Summit; press reports.

The motivation is understandable. As the European Parliament acknowledged, the continent is "currently heavily dependent on foreign technologies" and this dependence "looks set to continue." Countries that remain AI clients risk what one analyst termed "digital neo-colonialism" - paying perpetual rent to the owners of the world’s most advanced intelligence engines. But the question this paper raises is not whether sovereign AI matters. It is whether sovereign AI programs are targeting the right layer of the stack.

2. The Misdirected Investment

The overwhelming majority of sovereign AI programs focus on one goal: training a national foundation model. This is the wrong target. Training a frontier LLM is a one-time cost. GPT-4’s training run cost approximately $78 million. Gemini Ultra cost roughly $191 million. Even Llama 3, at the high end, cost approximately $500 million. These are large numbers, but they are dwarfed by the cost of serving these models. OpenAI’s inference costs reached $8.4 billion in 2025 and are projected to rise to $14.1 billion in 2026 - growing faster than revenue.5
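The scale of that gap can be checked with simple arithmetic. The sketch below uses only the figures quoted above; the variable and function names are illustrative. One caveat: OpenAI’s inference spend covers its whole model lineup, not GPT-4 alone, so the ratio is an order-of-magnitude comparison rather than a per-model accounting.

```python
# Figures quoted in the text, in USD.
GPT4_TRAINING_COST = 78e6  # one-time training cost
INFERENCE_COST_BY_YEAR = {2025: 8.4e9, 2026: 14.1e9}  # the 2026 value is a projection


def inference_to_training_ratio(inference_cost: float, training_cost: float) -> float:
    """Return how many times larger annual inference spend is than a one-time training cost."""
    return inference_cost / training_cost


ratios = {
    year: inference_to_training_ratio(cost, GPT4_TRAINING_COST)
    for year, cost in INFERENCE_COST_BY_YEAR.items()
}

for year, ratio in ratios.items():
    print(f"{year}: annual inference spend is about {ratio:.0f}x the GPT-4 training cost")
```

On these inputs the ratio is roughly 108x for 2025 and 181x for the 2026 projection.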

Figure 3. Training is a one-time expense; inference is a recurring, growing cost that dwarfs training by 100x annually. Sources: Sacra; Epoch AI; provider disclosures.

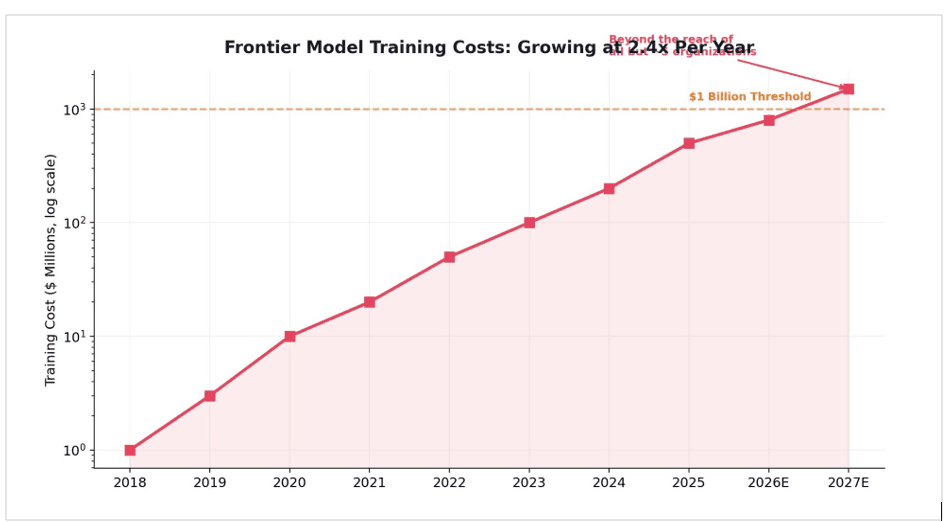

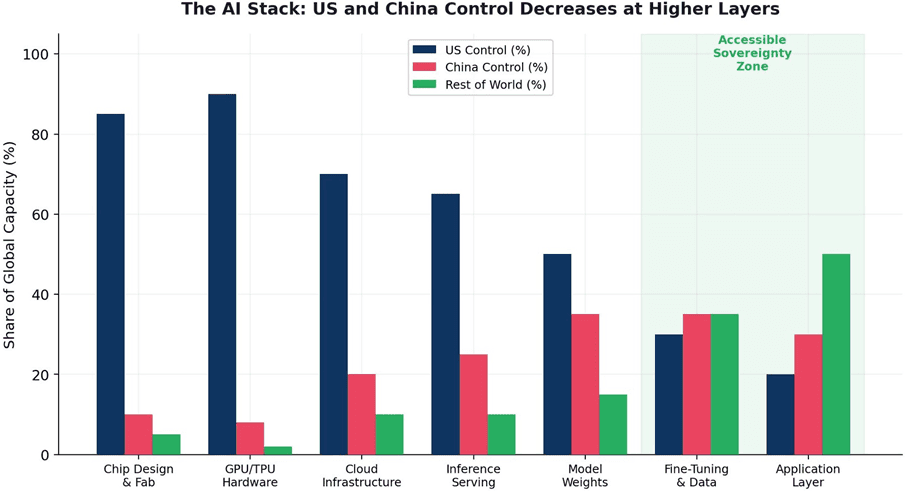

The cost of training frontier models is growing at approximately 2.4x per year.6 By 2027, the largest training runs will cost more than $1 billion, meaning only the best-funded organizations on Earth will be capable of training from scratch. But even this alarming trajectory misses the deeper point: training is the wrong metric for sovereignty. A model, once trained, is a static artifact - a set of numerical weights that can be downloaded, copied, and deployed anywhere. Inference is the living, ongoing dependency. It is the compute that must be available every second of every day to serve every query from every user. It is where the real cost, the real dependency, and the real sovereignty question reside.
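The compounding behind that trajectory can be sketched in a few lines. The 2.4x growth rate and the $1 billion threshold come from the text. The baseline is an assumption made for illustration only: GPT-4’s roughly $78 million run, dated to 2023. A different baseline run or year shifts the crossing year.

```python
def years_until_threshold(base_cost: float, growth_per_year: float, threshold: float) -> int:
    """Count whole years of compounding until the cost first exceeds the threshold."""
    if growth_per_year <= 1.0:
        raise ValueError("growth_per_year must be greater than 1")
    years = 0
    cost = base_cost
    while cost <= threshold:
        cost *= growth_per_year
        years += 1
    return years


BASE_YEAR = 2023   # assumption: baseline year for the GPT-4 cost figure
BASE_COST = 78e6   # GPT-4 training cost quoted in the text (USD)
GROWTH = 2.4       # annual growth factor quoted in the text
THRESHOLD = 1e9    # $1 billion

years = years_until_threshold(BASE_COST, GROWTH, THRESHOLD)
print(f"Largest runs exceed $1B around {BASE_YEAR + years}")
```

Under this baseline the threshold is crossed after three years of compounding, which is consistent with the claim that the largest runs exceed $1 billion by 2027.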

5RAISE Summit, "Sovereign AI: Why Nations are Treating Compute as Critical Infrastructure" (Feb 2026). Global sovereign AI spending projected >$100B.

6Euronews, "Which European Countries Are Building Their Own Sovereign AI" (Dec 2025). Germany (SOOFI), Switzerland (Apertus), Poland (PLLuM), Portugal (Amalia).

Figure 4. Frontier model training costs growing at 2.4x/year, crossing $1B by 2027. Only ~5 organizations worldwide can sustain this trajectory. Source: Epoch AI.

2.1 The Sovereignty Mismatch

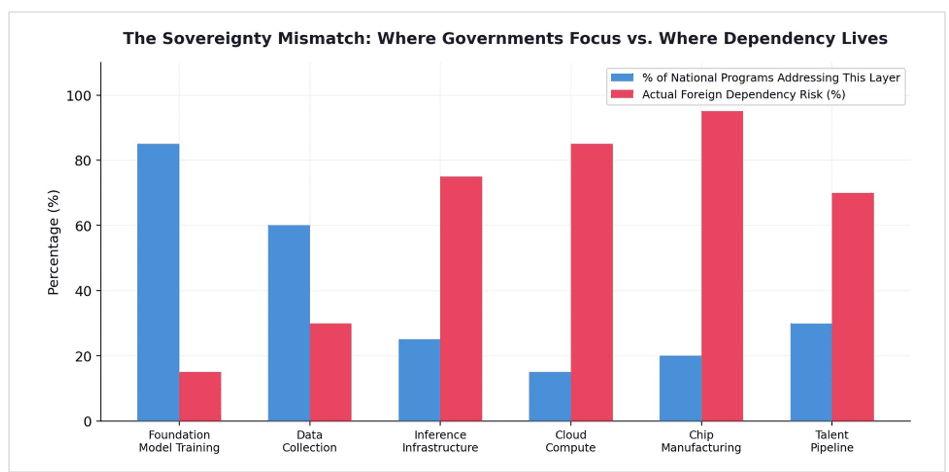

The mismatch between where sovereign AI programs invest and where actual foreign dependency exists is striking. An estimated 85% of national programs prioritize foundation model training. Yet the deepest foreign dependencies lie in inference infrastructure (75%), cloud compute (85%), and chip manufacturing (95%) - layers that most sovereign AI programs barely address.

Figure 5. The Sovereignty Mismatch. Governments focus on model training (85% of programs), while actual foreign dependency is concentrated in inference, cloud, and chips. Sources: CNAS; BCG; author analysis.

As BCG concluded in a March 2026 report: "For most countries, AI sovereignty conceived as full-stack autarky remains an illusion." The resource intensity and rapid pace of AI development mean that only a select few superpowers have the scale to sustain a sovereignty strategy across the full stack.

7Feenanoor, "The Rise of Sovereign AI: Why National Compute Defines 2026" (March 2026).

3. Sovereignty Theater: Three Case Studies

The gap between sovereign AI rhetoric and operational reality is best illustrated through specific examples. Many of the world’s most prominent "sovereign" AI models are, upon closer inspection, deeply dependent on American infrastructure at every layer.

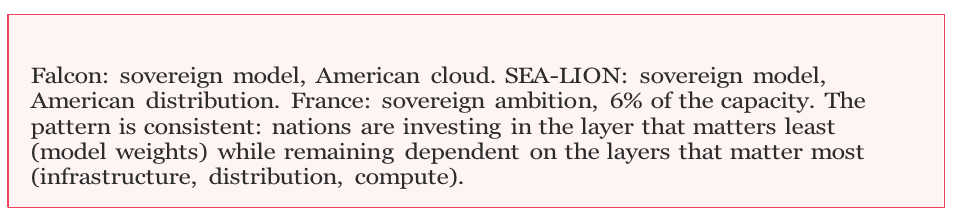

3.1 The UAE’s Falcon: Sovereign Model, American Cloud

The UAE’s Falcon LLM, developed by the Technology Innovation Institute in Abu Dhabi, was one of the first high-profile sovereign AI efforts. Falcon-40B briefly topped the Open LLM Leaderboard in 2023. But Falcon was trained on Amazon Web Services infrastructure - American cloud, American hardware, under American jurisdiction.8 If the US government were to restrict AWS access to the UAE - as it has restricted chip exports to other nations - Falcon’s entire training pipeline would be severed. The model weights themselves can be downloaded, but the operational capacity to retrain, update, or scale the model remains on rented American land.

3.2 Singapore’s SEA-LION: Sovereign Model, American Distribution

Singapore’s SEA-LION model, designed for Southeast Asian languages, depends on GitHub (owned by Microsoft), Hugging Face (US-headquartered), and soon IBM for distribution.9 The model itself may be trained on Singaporean priorities, but every layer of its distribution infrastructure is controlled by American corporations. This is not sovereignty. It is sovereignty of intent with dependency of operation.

3.3 France: Sovereign Ambition, 6% of the Capacity

France has committed €109 billion to AI and upgraded its Jean Zay supercomputer with 1,456 NVIDIA H100 GPUs. This sounds impressive until you learn that Microsoft alone intends to install 25,000 advanced GPUs in its French data centers by the end of 2025.10 France’s sovereign GPU capacity is approximately 6% of what a single American hyperscaler is deploying on French soil. The sovereign infrastructure is a rounding error next to the commercial infrastructure it seeks to replace.
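The 6% figure follows directly from the two GPU counts above. A minimal check, with illustrative variable names; note that it compares raw GPU counts and assumes nothing about the relative capability of the chips involved:

```python
JEAN_ZAY_H100_GPUS = 1_456      # sovereign capacity quoted in the text
MICROSOFT_FRANCE_GPUS = 25_000  # planned hyperscaler deployment quoted in the text

share = JEAN_ZAY_H100_GPUS / MICROSOFT_FRANCE_GPUS
print(f"Sovereign share of the hyperscaler deployment: {share:.1%}")
```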

8CNBC East Tech West, "Open-source models and cloud computing can help nations build sovereign AI" (July 2025).

9Bain & Company, "Sovereign AI Is the Next Fault Line in the Global Tech Sector" (2024). Training costs >$100M for frontier models.

10SIAI, "Sovereign AI Is Becoming Public Infrastructure" (Dec 2025). South Korea: 260,000 GPUs for sovereign clouds.

4. The NVIDIA Paradox: Sovereign AI’s Biggest Winner Is a Single American Company

There is a deep irony at the heart of the sovereign AI movement. The single largest commercial beneficiary of dozens of nations spending tens of billions of dollars to reduce their dependence on American technology is an American company: NVIDIA. In fiscal year 2026, NVIDIA’s sovereign AI revenue tripled to over $30 billion, now accounting for roughly 14% of total revenue.11

Jensen Huang has been pitching the concept of sovereign AI since at least 2023. As Cambridge University’s NLP journal noted, the pitch is "somewhat self-serving: when the UK does get around to building out that infrastructure, it’s certain to consist largely of chips sold by Huang’s company."12 Nations are treating AI compute as a national utility - and purchasing that utility almost exclusively from a single American vendor. Once national AI systems are built on NVIDIA’s CUDA software stack, switching to alternatives requires rewriting large amounts of code, creating strong lock-in.

The sovereign AI movement, as currently constituted, does not reduce dependence on American technology. It deepens it. It shifts the dependency from a subscription to OpenAI’s API ($20/month, cancelable anytime) to a multi-billion-dollar infrastructure investment in NVIDIA hardware (decade-long commitment, enormous switching costs). The irony is precise: nations seeking AI independence are locking themselves into the deepest, most expensive form of dependency on a single US supplier.

11Galileo, "How Much Does LLM Training Cost?" (Feb 2026). Human data annotation exceeds compute costs by up to 28x.

12CFA Institute, "AI Strategy After the LLM Boom: Maintain Sovereignty, Avoid Capture" (Jan 2026). Yann LeCun testimony.

5. The Open-Source Convergence

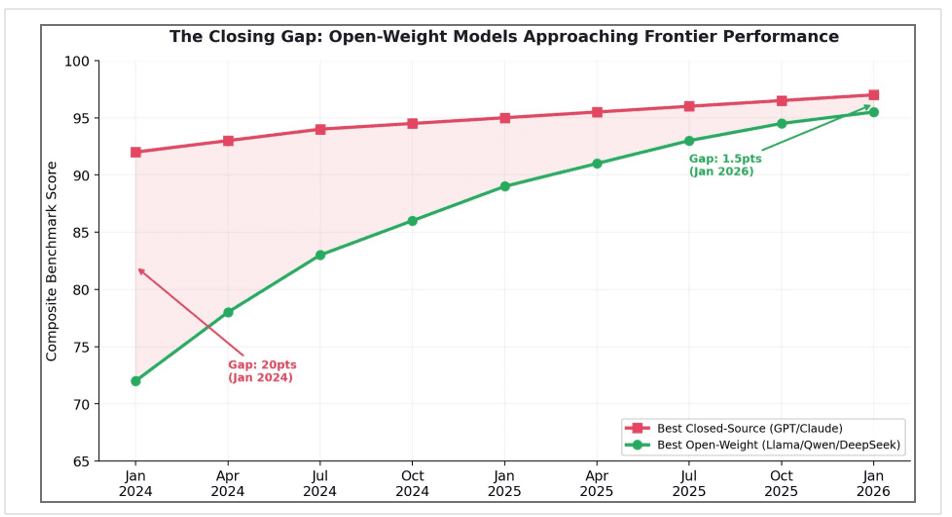

The strongest argument against investing billions in national model training is that the models themselves are converging toward commodity status. The performance gap between the best closed-source and the best open-weight models has collapsed from approximately 20 percentage points in January 2024 to just 1-2 points by early 2026. On MMLU, the gap narrowed from 17.5 to just 0.3 percentage points in a single year.13 Models like Llama, Qwen, and DeepSeek now compete at or near frontier performance on most benchmarks, and they are freely available for anyone to download and deploy.

Figure 6. Open-weight models have closed a 20-point gap to within 1.5 points of frontier closed-source models in two years. Sources: Chatbot Arena; LMSYS; Swfte AI.

This convergence fundamentally changes the sovereignty calculus. If a country’s goal is to have access to a capable LLM that is not controlled by a foreign company, it does not need to spend hundreds of millions training one from scratch. It can download Llama 4 or Qwen 3, fine-tune it on domestic data for a few million dollars, and deploy it on domestic infrastructure. The model is not the scarce resource. The infrastructure to run it is.

As panelists at CNBC’s East Tech West conference noted, open-source AI has helped multiple nations boost AI adoption and better develop their ecosystems.

13Arxiv, "Buy vs Build an LLM: A Decision Framework for Governments" (Feb 2026). NUS/Singapore framework.

14Digital State UA, "Ukraine Moves Toward a Sovereign AI Model" (Jan 2026). National LLM beta spring 2026.

The ASEAN region, with nearly 700 million people and 125,000 new internet users daily, is particularly well positioned to leverage this approach.

6. The DeepSeek Proof: Sovereignty for $6 Million

In January 2025, a laboratory in Hangzhou, China, released a model that sent shockwaves through Silicon Valley and rendered much of the sovereign AI debate obsolete in a single stroke. DeepSeek-R1 matched the reasoning capabilities of OpenAI’s top-tier models for a reported training cost of approximately $6 million - a fraction of the $100 million+ spent by Western rivals.15 DeepSeek-V3, the 671-billion-parameter Mixture-of-Experts model released in December 2024 on which R1 builds, was reportedly trained for $5.6 million and achieved performance parity with GPT-4o.

The significance for sovereign AI is profound. DeepSeek proved that "sovereign AI is possible for medium-sized nations and corporations that cannot afford the $10 billion clusters." It shifted the strategic competition from "who has the most GPUs" to "who has the most efficient architecture." And it achieved all of this while operating under American semiconductor export restrictions - using H800 chips specifically designed to be less powerful than the H100s available to Western labs.16

As the New America Foundation observed at the Paris AI Summit: "DeepSeek’s achievement suggests a different path - one that relies less on premium hardware and more on innovative engineering." Jensen Huang had been touring globally promoting sovereign AI, assuring leaders it was "not that costly" while positioning NVIDIA’s expensive GPUs as essential infrastructure. DeepSeek proved there was a cheaper way.17

15Arcade.dev, "Why AI Inference Is Underpriced" (Dec 2025). Anthropic burns 70% of every dollar. OpenAI loses money on $200/mo Pro.

16Yahoo Finance / The Information (Jan 2026). OpenAI projects $14B loss in 2026; cumulative losses 2023-2028: $44B.

17Lawfare, "Sovereign AI in a Hybrid World" (Nov 2024). Falcon trained on AWS; SEA-LION depends on GitHub/Hugging Face/IBM; France Jean Zay = 6% of Microsoft’s French GPU deployment.

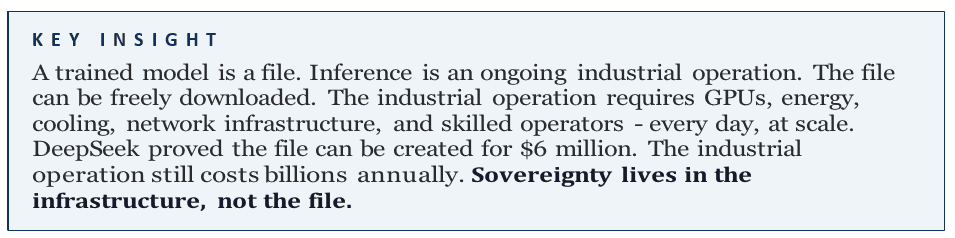

7. The Sovereignty-Cost Matrix

Not all paths to AI sovereignty are equal. When we map approaches on two dimensions - actual sovereignty achieved and cost incurred - a striking pattern emerges. The approach that most national programs choose (training a national frontier model on foreign cloud infrastructure) sits in the worst quadrant: high cost, low actual sovereignty. The approach that delivers the highest sovereignty per dollar invested - ensemble routing of open-weight models on domestic inference infrastructure - is the one almost no national program is pursuing.

Figure 9. The Sovereignty-Cost Matrix. Training a national model on foreign cloud (leftmost) achieves just 20% sovereignty at near-maximum cost. Ensemble routing on domestic infrastructure achieves 80% at 15% of the cost. Source: author framework.

The logic is straightforward. A country that trains a 70-billion-parameter model at great expense but hosts it on AWS or Azure has achieved model sovereignty but not operational sovereignty. The moment AWS decides to restrict service, raise prices, or comply with a foreign government’s sanctions, that nation’s "sovereign" AI goes dark. Conversely, a country that takes freely available open-weight models, fine-tunes them on local data, and runs them on domestically owned GPU clusters has achieved something far more durable: control over the ongoing operation of its AI systems.
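The "sovereignty per dollar" comparison in the matrix can be made explicit. The sketch below takes the Figure 9 values at face value: 20% sovereignty for a national model on foreign cloud, and 80% sovereignty at 15% of the cost for ensemble routing on domestic infrastructure. The relative cost of 1.00 for the first approach is an assumption standing in for "near-maximum cost", and the class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approach:
    name: str
    sovereignty: float    # share of practical sovereignty achieved, 0..1
    relative_cost: float  # cost relative to the most expensive option, 0..1

    @property
    def sovereignty_per_cost(self) -> float:
        """Sovereignty achieved per unit of relative cost."""
        return self.sovereignty / self.relative_cost


# relative_cost=1.00 is an assumed stand-in for "near-maximum cost".
national_model_foreign_cloud = Approach("National model on foreign cloud", 0.20, 1.00)
ensemble_domestic = Approach("Ensemble routing on domestic infrastructure", 0.80, 0.15)

advantage = (
    ensemble_domestic.sovereignty_per_cost
    / national_model_foreign_cloud.sovereignty_per_cost
)
print(f"Sovereignty per unit cost advantage: about {advantage:.0f}x")
```

With these inputs the domestic ensemble approach delivers roughly 27 times more sovereignty per unit of cost. The result is only as strong as the framework’s own scores.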

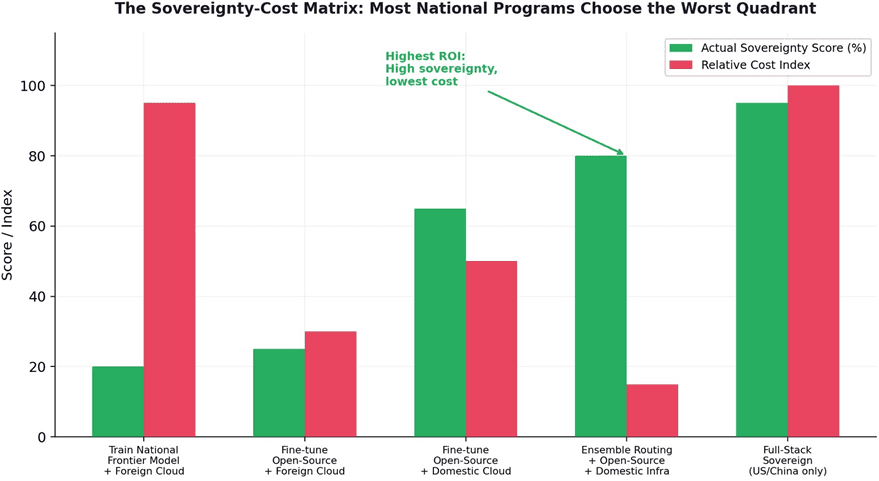

8. The AI Stack: Where Sovereignty Is Actually Achievable

The AI stack is not equally foreign-controlled at every layer. At the bottom - chip design and fabrication - US dominance is near-total (85%+), and no amount of national investment will change this in the medium term. But sovereignty does not require controlling every layer. It requires controlling the layers that determine operational independence.

Figure 10. US and China control over the AI stack decreases at higher layers. The "Accessible Sovereignty Zone" - fine-tuning, data, and application layers - is where emerging nations can achieve meaningful independence. Source: author analysis.

The insight is that sovereignty is achievable at the upper layers of the stack without controlling the lower layers. A country cannot fabricate its own GPUs (except the US, China, and Taiwan). But it can build or lease domestic GPU clusters, deploy open-weight models, fine-tune them on domestic data, and serve inference domestically. This "inference sovereignty" provides 80% of the practical independence that "full-stack sovereignty" provides, at a fraction of the cost.

Yann LeCun’s testimony to European policymakers reinforced this point: the immediate AI risk is not runaway general intelligence but "the capture of information and economic value within proprietary, cross-border systems." Sovereignty, at both state and firm level, is central.18 The question is what form of sovereignty is achievable and cost-effective.

18Trefis, "Can Sovereign AI Buffer Nvidia?" (March 2026). NVIDIA sovereign AI revenue tripled to $30B in FY2026, 14% of total revenue.

9. Implications: From Model Sovereignty to Inference Sovereignty

9.1 Redefining the Goal

National AI strategies should be reoriented around three pillars: domestic inference infrastructure (GPU clusters, energy, cooling); an open-weight model ecosystem (fine-tuning, domain adaptation, multilingual optimization); and intelligent orchestration (ensemble routing that delivers frontier-quality output at emerging-market cost structures). This triad achieves the practical objectives of sovereignty - data residency, operational independence, cultural and linguistic alignment - without the prohibitive cost of training frontier models from scratch.
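To make the orchestration pillar concrete, the sketch below shows one simple form of cost-aware routing: send each query to the cheapest domestically hosted model that meets the quality the task requires. It illustrates the general idea only and does not describe any particular production system. The model names, quality scores, and prices are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    name: str
    quality: float             # hypothetical benchmark score, 0..1
    cost_per_1k_tokens: float  # hypothetical serving cost in USD


def route(required_quality: float, endpoints: list[ModelEndpoint]) -> ModelEndpoint:
    """Pick the cheapest endpoint meeting the quality bar.

    Falls back to the highest-quality endpoint when none qualifies.
    """
    eligible = [e for e in endpoints if e.quality >= required_quality]
    if not eligible:
        return max(endpoints, key=lambda e: e.quality)
    return min(eligible, key=lambda e: e.cost_per_1k_tokens)


# Hypothetical fleet of open-weight models on domestic GPU clusters.
FLEET = [
    ModelEndpoint("small-local-8b", quality=0.60, cost_per_1k_tokens=0.0002),
    ModelEndpoint("mid-local-70b", quality=0.80, cost_per_1k_tokens=0.0010),
    ModelEndpoint("large-local-moe", quality=0.92, cost_per_1k_tokens=0.0040),
]

for bar in (0.50, 0.75, 0.90):
    print(f"quality >= {bar:.2f} -> {route(bar, FLEET).name}")
```

In practice the quality requirement would itself be estimated per query, for example by a lightweight classifier. That step is outside the scope of this sketch.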

9.2 The Multilingual Imperative

For nations pursuing inference sovereignty, the multilingual dimension is critical. Frontier models trained primarily on English data perform poorly on low-resource languages. National programs that fine-tune open-weight models on domestic linguistic corpora - Hindi, Arabic, Bahasa, Swahili, Ukrainian, Portuguese - achieve both sovereignty and capability superiority in their own languages. This is not a consolation prize. For domestic users, a model that speaks their language fluently is more valuable than a frontier model that does not.

9.3 Compute Alliances

Mid-sized economies are beginning to pool resources into "Compute Alliances" - regional GPU clusters that provide shared inference capacity across borders while maintaining data residency within each participating nation.19 The EU’s EuroHPC AI Factories initiative, Latin America’s Latam-GPT consortium, and ASEAN regional compute partnerships represent early examples. These alliances recognize that the unit economics of inference require scale that most individual nations cannot achieve alone - but that regional cooperation can provide without surrendering sovereignty to a foreign hyperscaler.

10. Conclusion

The sovereign AI gold rush of 2024-2026 will be remembered as one of the largest misdirected investments in technology history. Dozens of countries are spending billions to train national foundation models that will be obsolete within 18 months, while the open-weight ecosystem produces comparable models for free - and DeepSeek proved it can be done for $6 million. Meanwhile, the single largest beneficiary of "sovereign AI" spending is NVIDIA, a single American company that tripled its sovereign revenue to $30 billion in one year. Nations seeking AI independence are locking themselves into the deepest, most expensive form of dependency on a single US supplier.

19Cambridge University NLP Journal, "Sovereign AI in 2025" (Aug 2025). Jensen Huang criticism of UK "self-serving: infrastructure will consist largely of chips sold by Huang’s company."

As the New America Foundation warned: "Without a voice in shaping AI governance, developing countries risk being trapped in a cycle of technological dependence, forced to adopt tools and policies ill-suited to their local circumstances - or to embrace corporate visions of sovereignty that may serve shareholders more than citizens."20

True AI sovereignty is not determined by whether a nation trained its own model. It is determined by whether a nation controls the infrastructure that serves AI to its citizens every day - the GPUs, the energy, the data centers, and the orchestration software that routes each query to the right model at the right cost. This is inference sovereignty, and it is simultaneously cheaper to achieve, more durable to maintain, and more strategically consequential than model sovereignty.

The nations that will control their AI destiny in the coming decade are not those building the most expensive models. They are those building the most efficient, domestically controlled inference architectures - running open-weight models fine-tuned on local data, orchestrated by intelligent routing systems that deliver frontier-quality output at the cost structures their economies can sustain. The flag matters less than the land it stands on.

About Redrob Labs

Redrob (redrob.io) builds large language models and AI tools for the next three billion users. Founded in 2018, the company operates a proprietary multi-model ensemble architecture delivering 90% of frontier performance at 5% of the cost - purpose-built for the unit economics of India and emerging markets. Headquartered in New York with offices in Seoul, New Delhi, and Mumbai. Backed by world-class VC firms.

Redrob Labs is the research division of Redrob. Our work is published at redrob.io/research.

20Swfte AI, "Open Source AI Models: Why 2026 Is the Year They Rival Proprietary Giants" (Jan 2026). MMLU gap narrowed from 17.5 to 0.3 points.